HPC Specialist Solutions Architect

Job description

We are seeking a Specialist HPC Infrastructure Solutions Architect to design, build, and optimize next-generation high-performance computing (HPC) platforms for AI, simulation, and large-scale data processing workloads. The ideal candidate combines deep knowledge of cloud-native architecture, Kubernetes orchestration, networking, and HPC system design with hands-on experience implementing NVIDIA GPU-based compute environments and MLOps toolchains. This role sits at the intersection of infrastructure engineering, accelerated computing, and AI systems design, shaping the foundation for high-throughput, low-latency distributed workloads in cloud environments.

You're welcome to work remotely from any EU country.

Your responsibilities will include:

- Architect and implement scalable HPC clusters optimized for AI, simulation, and distributed training, leveraging container orchestration frameworks and schedulers (e.g., Kubernetes, Slurm).

- Design and integrate GPU-accelerated compute infrastructures featuring NVIDIA Hopper and Blackwell architectures, NVLink/NVSwitch, and InfiniBand/RoCE interconnects.

- Deploy and manage GPU Operator and Network Operator stacks for automated lifecycle management of GPU and high-speed networking components.

- Design and validate cloud HPC environments, focusing on low-latency, high-bandwidth networking, multi-GPU scaling, and efficient workload scheduling.

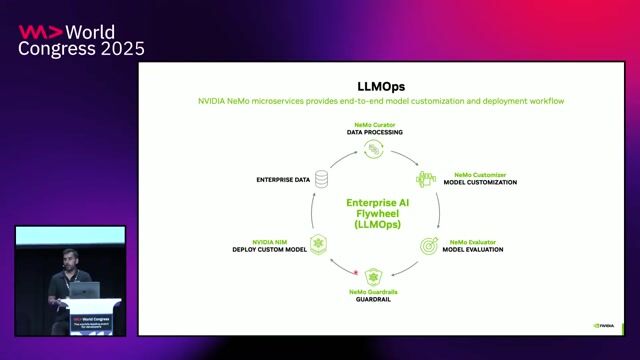

- Lead reference architectures for AI/ML model training, data pipelines, and MLOps integrations using modern observability and CI/CD tooling.

- Collaborate with hardware vendors (e.g., NVIDIA) and cloud providers to evaluate and optimize emerging HPC and GPU technologies.

- Benchmark system performance, identify bottlenecks, and tune resource utilization across compute, network, and storage tiers.

- Provide expert-level technical guidance to customers, internal teams, and partners on HPC architecture patterns, operational excellence reviews, and customer engagements.

Requirements

- Bachelor's or Master's degree in Computer Science, Engineering, or a related field (Ph.D. a plus).

- 3+ years of hands-on experience architecting HPC or large-scale GPU clusters.

- Expertise in Linux systems, Kubernetes, container runtimes (containerd, CRI-O, Docker), and related CI/CD practices.

- Strong understanding of HPC networking protocols and RDMA stacks (InfiniBand, NVLink/NVSwitch).

- Deep understanding of storage and I/O optimization for large datasets (Ceph, Lustre, NFS, GPUDirect Storage).

- Familiarity with Terraform, Ansible, Helm, and GitOps workflows.

- Strong scripting skills in Python or Bash for automation and tool integration.

- Excellent communication and documentation skills; ability to lead design reviews and customer engagements.

It will be an added bonus if you have:

- Proficiency with the NVIDIA GPU ecosystem: GPU Operator, MIG, DCGM, NCCL, Nsight, and CUDA stack management.

- Experience designing or managing AI/ML pipelines via MLflow, Kubeflow, NeMo, or similar frameworks.

- Experience with cloud-native HPC offerings (Slurm, LSF, PBS, etc.).

- Background in designing multi-tenant GPU infrastructures or AI training farms.

- Exposure to distributed ML frameworks (PyTorch DDP, DeepSpeed, Megatron).

- Knowledge of observability for HPC (Prometheus, DCGM Exporter, Grafana, NVIDIA NGC monitoring tools).

- Contribution to open-source HPC/CUDA/Kubernetes projects is a strong plus.

Benefits & conditions

- Competitive salary and comprehensive benefits package.

- Opportunities for professional growth within Nebius.

- Flexible working arrangements.

- A dynamic and collaborative work environment that values initiative and innovation.

We're growing and expanding our products every day. If you're up to the challenge and are as excited about AI and ML as we are, join us!