Kafka Confluent Solution Architect - 6 Months - London

Kirtana Consulting is looking for a Kafka Confluent Solution Architect for a 6-month rolling contract in London.

Job description:

Role Title: Confluent Solution Architect

Minimum number of relevant years of experience: 8+ Years

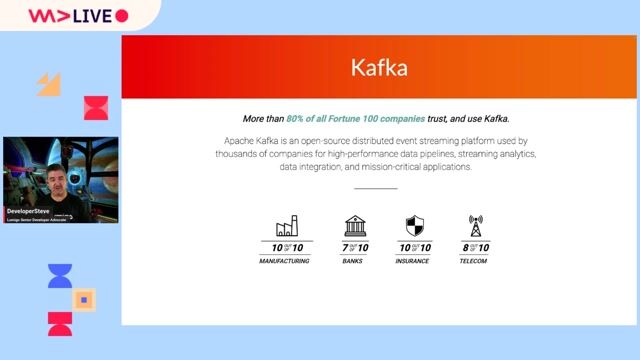

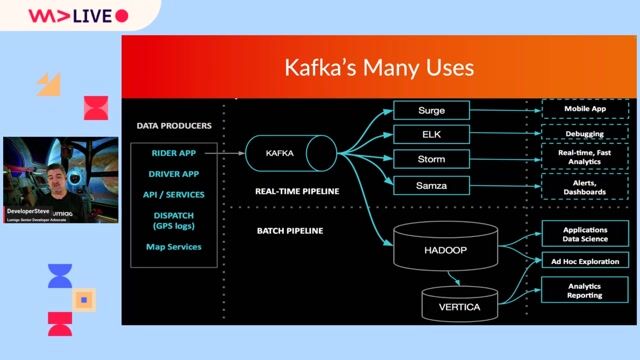

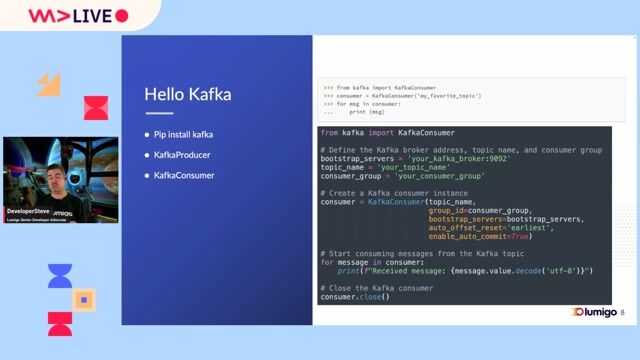

As a Confluent Solution Architect, you will be responsible for designing, developing, and maintaining scalable real-time data pipelines and integrations using Kafka and Confluent components. You will collaborate with data engineers, architects, and DevOps teams to deliver robust streaming solutions.

Mandatory skills:

8+ years of hands-on experience with Kafka (any distribution: open-source, Confluent, Cloudera, AWS MSK, etc.)

Strong proficiency in Java, Python, or Scala

Solid understanding of event-driven architecture and data streaming patterns

Experience deploying Kafka on cloud platforms such as AWS, GCP, or Azure

Familiarity with Docker, Kubernetes, and CI/CD pipelines

Excellent problem-solving and communication abilities

Desired skills:

Candidates with experience in Confluent Kafka and its ecosystem will be given preference:

Experience with Kafka Connect, Kafka Streams, KSQL, Schema Registry, REST Proxy, and Confluent Control Center

Hands-on experience with Confluent Cloud services, including ksqlDB Cloud and Apache Flink

Familiarity with Stream Governance, Data Lineage, Stream Catalog, Audit Logs, and RBAC

Confluent certifications (Developer, Administrator, or Flink Developer)

Experience with Confluent Platform, Confluent Cloud managed services, multi-cloud deployments, and Confluent for Kubernetes

Knowledge of data mesh architectures, KRaft migration, and modern event streaming patterns

Exposure to monitoring tools (Prometheus, Grafana, Splunk)

Experience with data lakes, data warehouses, or big data ecosystems

Personal:

Strong analytical skills

A high degree of initiative and flexibility

Strong customer orientation

Strong focus on quality

Excellent verbal and written communication skills