Data Reliability Engineer II

Sync NI

2 days ago

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Job location

Tech stack

Java

Artificial Intelligence

Airflow

Amazon Web Services (AWS)

Azure

Big Data

Google BigQuery

Cloud Computing

Cloud Engineering

Cloud Storage

Computer Programming

Data as a Service

Information Engineering

Data Fusion

Data Governance

Data Infrastructure

Data Loss

Data Security

Data Systems

Data Warehousing

Relational Databases

Data Flow Control

Hadoop

Python

NumPy

Reliability Engineering

Cloud Services

DataOps

Software Engineering

Data Streaming

Data Logging

Data Processing

Scripting (Bash/Python/Go/Ruby)

Google Cloud Platform

Spark

Git

Pandas

Containerization

PySpark

Kubernetes

Information Technology

Kafka

Splunk

New Relic (SaaS)

AppDynamics

Software Version Control

Docker

ELK

Job description

The Data Reliability Engineer II (DRE II) plays a crucial role in CME's cloud transformation. Aligned to data product pods, the DRE II ensures that our data infrastructure remains reliable, scalable, and efficient as the GCP data footprint expands rapidly.

Accountabilities

- Automate data tasks on Google Cloud Platform (GCP).

- Work with data domain owners, data scientists, and other stakeholders to ensure that data is consumed effectively on GCP.

- Design, build, secure, and maintain data infrastructure, including data pipelines, databases, data warehouses, and data processing platforms on GCP.

- Measure and monitor the quality of data on GCP data platforms.

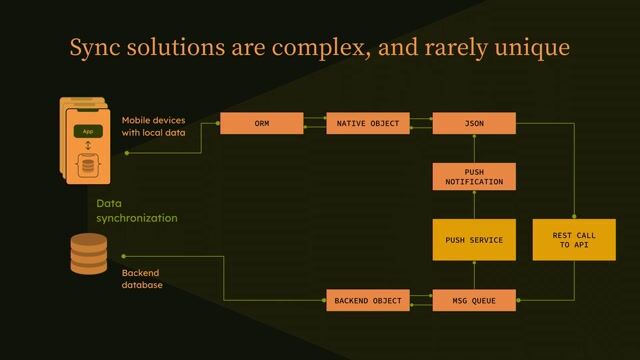

- Implement robust monitoring and alerting systems to proactively identify and resolve issues in data systems.

- Respond to incidents promptly to minimize downtime and data loss.

- Develop automation scripts and tools to streamline data operations and make them scalable to accommodate growing data volumes and user traffic.

- Optimize data systems to ensure efficient data processing, reduce latency, and improve overall system performance.

- Collaborate with data and infrastructure teams to forecast data growth and plan for future capacity requirements.

- Ensure data security and compliance with data protection regulations.

- Implement best practices for data access controls and encryption.

- Collaborate with data engineers, data scientists, and software engineers to understand data requirements, troubleshoot issues, and support data-driven initiatives.

- Continuously assess and improve data infrastructure and data processes to enhance reliability, efficiency, and performance.

- Maintain clear and up-to-date documentation related to data systems, configurations, and standard operating procedures.

Requirements

- Education: Bachelor's or Master's degree in Computer Science, Software Engineering, Data Science, or a related field, or equivalent practical experience.

- Professional Experience: Experience as a Site Reliability Engineer or a similar role with a strong focus on data infrastructure management.

- Methodologies: Understanding of Site Reliability Engineering (SRE) practices.

- Data Technologies: Proficiency in data technologies such as relational databases, data warehousing, big data platforms (e.g., Hadoop), data streaming (e.g., Kafka), and cloud services (e.g., AWS, GCP, Azure).

- Programming Skills: Proficiency in languages such as Python (NumPy, pandas, PySpark), Java (core Spark with Java, functional interfaces, collections), or Scala, with experience in automation and scripting.

- Infrastructure Tools: Experience with containerization and orchestration tools like Docker and Kubernetes is a plus.

- Compliance: Experience with data governance, data security, and compliance best practices on GCP.

- Software Development: Understanding of software development methodologies and best practices, including version control (e.g., Git) and CI/CD pipelines.

- Cloud Computing: Experience with cloud computing and data-intensive applications and services, ideally on Google Cloud Platform (GCP), would be highly beneficial.

- Quality Assurance: Experience with data quality assurance and testing on GCP.

- GCP Data Services: Proficiency with GCP data services (BigQuery, Dataflow, Data Fusion, Dataproc, Cloud Composer, Pub/Sub, Google Cloud Storage).

- Monitoring Tools: Understanding of logging and monitoring using tools such as Cloud Logging, ELK Stack, AppDynamics, New Relic, and Splunk.

- Advanced Tech: Knowledge of AI and ML tools is a plus.

- Certifications: Google Associate Cloud Engineer or Data Engineer certification is a plus.

- Domain Specifics: Experience in data engineering or data science.

Benefits & conditions

- Bonus Programme

- Equity Programme

- Employee Stock Purchase Plan (ESPP)

- Private Medical and Dental coverage

- Mental Health Benefit Programme

- Group Pension Plan

- Income Protection

- Life Assurance

- Cycle To Work

- EV Car Benefit Scheme

- Gym Membership

- Family Leave

- Education Assistance - MBA/Advanced Degree/Bachelor's Degree

- Ongoing Employee Development Training/Certification

- Hybrid Working