GCP Data Engineer - DevOps | Java | Microservices

Middleware Systems

2 days ago

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Job location

Tech stack

Java

Artificial Intelligence

Airflow

Big Data

Google BigQuery

Cloud Computing

Cloud Storage

Computer Programming

Continuous Integration

Information Engineering

Data Governance

ETL

Data Security

DevOps

Data Flow Control

GitHub

Performance Tuning

Scrum

Ansible

Prometheus

SQL Databases

Data Processing

Google Cloud Platform

Grafana

Spark

Spring Boot

Event Driven Architecture

PySpark

GitLab CI

Kubernetes

Information Technology

Kafka

Data Management

REST

Terraform

Data Pipelines

Docker

Jenkins

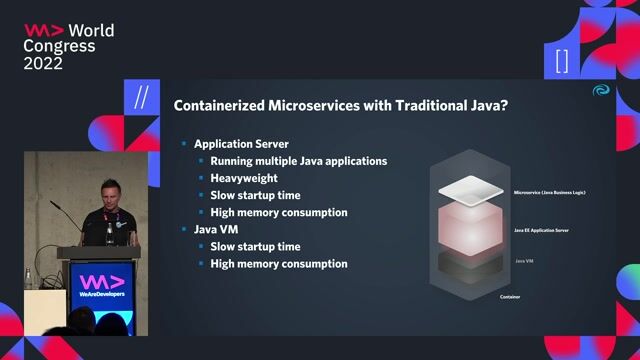

Microservices

Job description

We are looking for an experienced GCP Data Engineer with strong expertise in Google Cloud Platform, DevOps practices, Java development, and Microservices architecture. The candidate will be responsible for designing, building, and maintaining scalable data pipelines and cloud-native applications while ensuring efficient CI/CD and automation practices.

- Design, build, and maintain scalable data pipelines on GCP

- Develop and manage ETL/ELT pipelines using cloud-native tools

- Build microservices using Java/Spring Boot

- Work with Big Data technologies and cloud data platforms

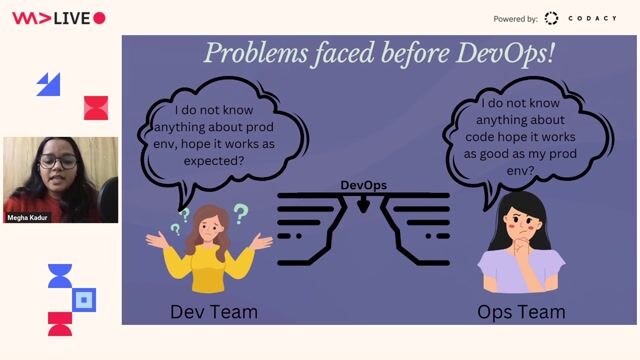

- Implement CI/CD pipelines and DevOps automation

- Collaborate with data scientists, analysts, and engineering teams

- Optimize data processing performance and reliability

- Ensure data security, governance, and compliance

- Monitor and troubleshoot data pipelines and microservices

Required Skills

GCP Technologies

- Strong experience with Google Cloud Platform

- BigQuery

- Cloud Dataflow

- Cloud Composer/Airflow

- Pub/Sub

- Cloud Storage

- Dataproc

Data Engineering

- ETL/ELT pipeline development

- Data modelling and warehousing

- SQL and performance optimization

- Batch and streaming data pipelines

Programming

- Java (Java 8 or above)

- Spring Boot

- REST API development

- Microservices architecture

DevOps

- CI/CD tools (Jenkins/GitLab CI/GitHub Actions)

- Docker

- Kubernetes

- Infrastructure as Code (Terraform/Ansible)

- Monitoring tools (Prometheus/Grafana/Stackdriver)

Requirements

- Experience with Kafka or event-driven architecture

- Experience with Data Governance/Data Quality

- Knowledge of Spark/PySpark

- Experience with Agile/Scrum

Education

Bachelor's or Master's degree in Computer Science, Engineering, or related field

Good to Have

- GCP Certifications

- Experience working on large-scale cloud data platforms

- Exposure to AI/ML pipelines on GCP