Data & Analytics Engineer (Databricks Lakehouse)

Job description

We're looking for a self-motivated and results-driven Data & Analytics Engineer to join a forward-thinking organisation during an exciting transformation of its global data capabilities.

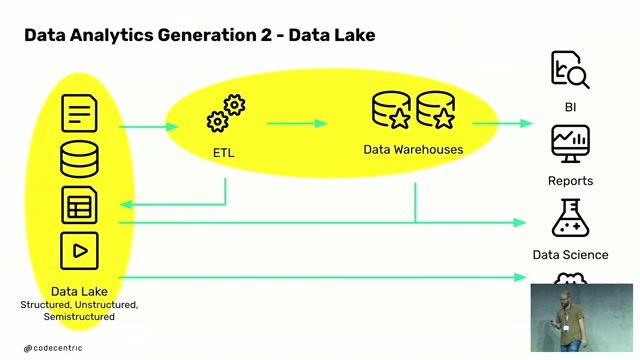

In this role, you'll take ownership of designing, building, and optimising reliable data pipelines and governed analytical datasets within a Databricks Lakehouse environment. You'll play a key role in transforming raw data into high-quality, business-ready insights while ensuring strong data governance, quality, and performance.

You'll collaborate closely with business stakeholders and delivery partners to gather requirements, enhance processes, and deliver scalable, high-impact data solutions.

- Build and maintain batch/streaming pipelines (PySpark, Spark SQL, DLT) across bronze, silver, and gold layers

- Implement CDC, incremental loads, and performance tuning (Z-Order, OPTIMIZE, VACUUM)

- Develop curated datasets and dimensional models for BI

- Optimise SQL warehouse performance and maintain data models

- Ensure data quality, governance, and documentation standards

- Collaborate with stakeholders and support CI/CD processes

Requirements

- Strong Databricks and Spark (PySpark/Spark SQL) experience

- Knowledge of Delta Lake, DLT, and streaming pipelines

- Experience with data modelling and SQL for analytics

- Familiarity with CI/CD, Git, and infrastructure as code

- Understanding of data governance and quality frameworks

- Exposure to BI tools (e.g. Tableau)