Data Scientist

Job description

We are looking for a Data Scientist to join our end-to-end data projects within IoT and data platform environments. You will work across the entire lifecycle: from exploratory analysis and ML model design to GenAI solutions, through to deployment and production maintenance.

Depending on the project, you will operate within an ecosystem primarily based on AWS (IoT, Lambda, S3, Redshift, Glue), although we also work with Azure.

We deeply value your technical expertise, but also that innate curiosity to "tinker" (cacharreo) and constantly learn new things. At GALEO, Python is our universal language, used daily by 100% of the team, from Data Engineers and Scientists to IoT, Systems, and Web specialists. If you are motivated by experimenting and evolving within a shared and dynamic stack, you'll fit right in.

What you will do on a daily basis

- Exploratory Data Analysis (EDA): Analysing datasets to identify relevant patterns, trends, anomalies, and correlations to guide solution design.

- Machine Learning Model Development: Designing, training, and evaluating predictive models and ML/DL algorithms (supervised, unsupervised, time series) tailored to each project's needs.

- Generative AI Solutions: Designing and implementing solutions based on LLMs, including RAG, prompt engineering, fine-tuning, and orchestration with frameworks such as LangChain.

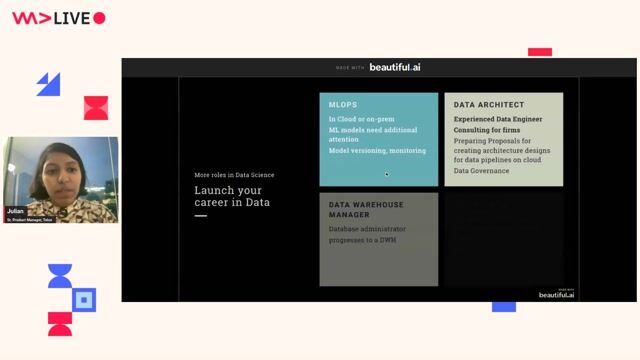

- Model Deployment & Production (MLOps): Taking models from experimentation to production, including the creation of training, monitoring, and retraining pipelines.

- Data Pipeline Collaboration: Working alongside the Data Engineering team to design ETLs and data flows that feed the models, understanding the full data lifecycle.

- Communicating Results: Translating complex technical findings into clear reports, charts, and interactive dashboards for management and non-technical departments.

- Continuous Optimisation: Iterating on production models, improving performance, and proposing new approaches based on results and business needs.

Requirements

- Degree in Computer Science, Telecommunications Engineering, Mathematics, Physics, Statistics, or a related technical field.

- Proficiency in Python and its data science ecosystem (pandas, NumPy, scikit-learn, matplotlib/seaborn).

- Experience with ML/DL frameworks: scikit-learn, TensorFlow, PyTorch, or similar.

- Solid knowledge of, and experience with, SQL.

- Experience in Exploratory Data Analysis (EDA) and data preparation for modelling.

- Comprehensive understanding of supervised and unsupervised Machine Learning algorithms.

- Understanding of data pipelines (ETL) and the ability to collaborate with Data Engineering teams.

- Demonstrable experience or interest in production model deployment (MLOps).

- Strong problem-solving skills, including the ability to identify, analyse, and develop viable solutions.

- Excellent communication and presentation skills.

- At least 2 years of experience as a Data Scientist.

- Experience with LLMs and GenAI (LangChain, Anthropic/OpenAI APIs, prompt engineering, RAG, fine-tuning).

- Experience with PySpark and distributed processing.

- Data processing experience in cloud environments (AWS, Azure, and/or GCP).

- Proficiency in Forecasting, NLP, and Reinforcement Learning algorithms.

- Knowledge of mathematical optimisation algorithms/solvers (e.g., Gurobi).

- Previous experience in IT consultancy.

- Fluency with visualisation tools, preferably PowerBI.

- Proficiency with Git and Docker.

- Knowledge of Agile project management best practices.

- Intermediate English (B2).

We operate 100% remotely. Your ability to work as part of a team and your communication skills are fundamental to making this formula work.

We don't expect you to master everything. We value what isn't written here: your ability to absorb new knowledge, your experience with different technologies, and your motivation to keep learning from every technological ecosystem.

Benefits & conditions

At GALEO, we value our team and want to offer the best conditions for you to develop your career in an environment that adapts to your needs:

- Remote work and flexible hours.

- Holiday & Time off: 23 days of annual leave, Christmas Eve and New Year's Eve off, 2 months of intensive summer hours, and 2 "long weekend" days.

- Competitive salary.

- Referral Rewards: We reward you for referring talent to GALEO. Over 70% of our employees come from internal referrals, which says a lot about how we work!

- Growth & Training: A professional development plan including certifications: AWS, Azure, GCP, Kubernetes, Terraform, Confluent, Databricks, and more.

- Work Environment: A professional, expert, flexible, and friendly atmosphere. We work in small teams with a remote format tailored to you.

- Innovation: Innovative projects in key sectors: Defence, Energy, Smart Buildings, Transport, Healthcare, Industry, and Infrastructure.

- Techie Vibe: We love staying up-to-date with the latest tech. We are a close-knit team, essentially a big family.

- Trust: We trust you and your commitment to meeting objectives. We believe in diversity as a driver for innovation.