AI/ML Engineer (Databricks, MLOps, MLflow, AutoML)

Job description

We're hiring multiple AI/ML Engineers with strong Databricks experience to design, build, and deploy machine learning solutions at scale. You'll work across data engineering, data science, and platform teams to deliver production-ready models using the Databricks ecosystem.

My client is a Databricks Partner consultancy looking for multiple contractors to join on an initial 6-month engagement, fully remote and outside IR35.

Tech Stack: Databricks (core platform), Apache Spark/PySpark, MLOps, MLflow, AutoML, Feature Store, Model Serving, Delta Lake

- Build and deploy ML models using Databricks (ML, Workflows, Feature Store)

- Develop scalable pipelines using Apache Spark (PySpark)

- Train, evaluate, and optimise models for real-world use cases

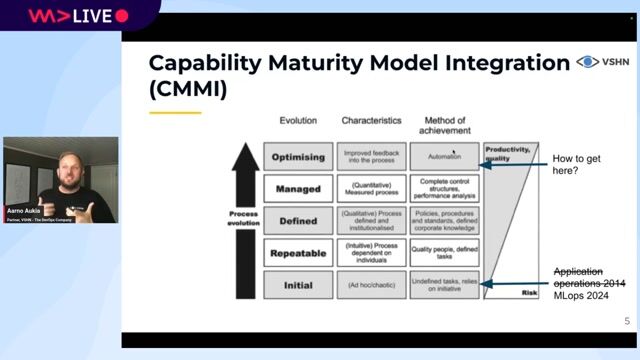

- Implement MLOps best practices (CI/CD, monitoring, versioning)

- Work with large-scale data in Delta Lake

- Collaborate with data engineers to productionise pipelines

- Deploy models via APIs or batch scoring workflows

- Ensure models are reliable, explainable, and performant

- Contribute to architecture decisions across the data platform

Requirements

- Strong hands-on experience with Databricks

- Proven ML experience (classification, regression, NLP, or similar)

- Solid Python skills (Pandas, NumPy, Scikit-learn)

- Experience with PySpark/Spark

- Experience deploying ML models into production environments

- Understanding of MLOps frameworks (MLflow, CI/CD pipelines)

- Experience working with cloud platforms (AWS, Azure, or GCP)

- Strong SQL and data modelling knowledge

Desirable Experience

- Experience with Databricks MLflow and Feature Store

- Exposure to LLMs/GenAI (e.g. RAG pipelines, fine-tuning)

- Experience with streaming data (Kafka, Spark Streaming)

- Knowledge of Docker/Kubernetes

- Experience with Azure ML or SageMaker

- Familiarity with data governance and security best practices

What We're Looking For

- Someone who can bridge data engineering and data science

- Comfortable working in a production-focused environment

- Strong communicator who can work with technical and non-technical stakeholders

- Pragmatic mindset - focused on delivering business value, not just models