DevOps Engineer

Job description

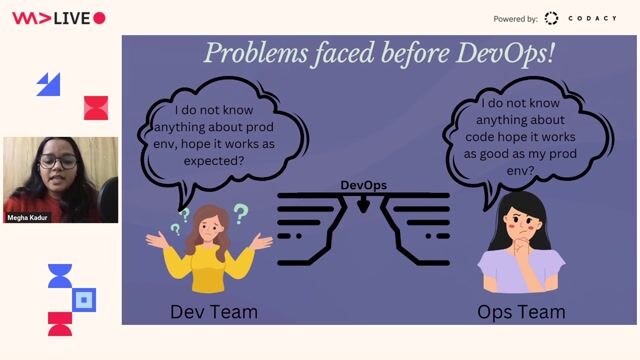

The DevOps Engineer will establish the organization's data infrastructure, promote engineering best practices for data management, and maintain a high bar for data quality.

Responsibilities include, but are not limited to:

- Work with our data customers to provide reliable and timely data
- Choose the appropriate level of data extraction when designing and implementing tables in the data lake
- Write code that reliably extracts data from REST service calls and loads it into the data lake
- Contribute to data operations with process documentation, service levels, data quality, performance dashboards, and continuous improvement efforts
- Implement operational improvements within data pipelines through automation, which requires ingenuity and creativity
- Implement standards and procedures, and work with the team to ensure conformity to standards and best practices
- Manage incidents: triage activities, work with stakeholders, and communicate timely updates
- Prepare for and respond to audits for compliance with established standards, policies, guidelines, and procedures
- Collaborate with data engineering and data governance teams on release planning, training, and handoffs
- Manage processes at various levels of maturity while maintaining consistent quality and throughput expectations
- Ensure visibility of all operational metrics and related issues to peers and leadership, and proactively identify opportunities for operational efficiencies
- Be part of our support rotation

Top performers will be able to demonstrate:

- Experience enriching data using third-party data sources

Requirements

- Working knowledge of the latest big data technologies and systems
- Experience building out the tooling and infrastructure to make systems more self-service
- Knowledge of tools like Databricks and Astronomer/Airflow to run workloads
- Demonstrable experience building internal tooling using Scala, ZIO, and Cats

Requirements:

- Bachelor's degree in a technical field
- 5 years of overall relevant experience
- 2 years of experience in the following:
  - Developing extract-transform-load (ETL) processes
  - Working in both SQL and Python