Staff Engineer (AI & Automation)

Job description

Grafana Labs is seeking a Staff Engineer (AI & Automation) to own the AI agent infrastructure and automation platform that powers our Marketing Operations organization. You'll build multi-agent architectures, LLM integrations, and backend services that connect AI models to internal and third-party data platforms. You'll ship production systems that teams depend on daily.

This is a high-autonomy role where you own the technical direction. You'll identify the highest-leverage problems across Marketing, RevOps, and SDR teams, design the solutions, and ship them. You'll define the technical direction for the automation platform (data models, API contracts, shared libraries, reference architectures) and partner with Data Engineering, GTM Systems, and Field Operations to build scalable, self-service automation that eliminates manual work and drives operational efficiency.

What You'll Be Doing

Agentic Systems & AI Infrastructure

-

Own end-to-end development of multi-agent AI systems, from architecture and implementation through testing, deployment, and ongoing operation

-

Build modular, composable agentic systems using orchestration frameworks (LangChain, CrewAI, Anthropic MCP, or similar) that operate 24/7 across teams

-

Develop reusable agentic skills that agents invoke across interfaces (Slack, dashboards, internal apps, CLIs)

-

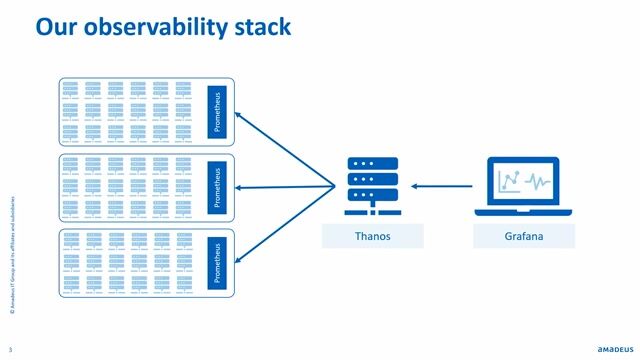

Implement observability and feedback loops including logging, performance metrics, prompt iteration, model evaluation, and cost management

-

Establish governance and compliance standards for AI workflows including access controls, audit trails, PII handling, and human-in-the-loop escalation paths

Systems Integration & Backend Services

- Build MCP servers, APIs, CLIs, and microservices connecting AI models to business systems (BigQuery, Slack, CRMs, email, calendars, analytics tools)
- Architect data flows for retrieval-augmented generation (RAG), connecting LLMs to internal knowledge bases, customer data, and real-time business context
- Build serverless or containerized services (GCP Cloud Functions, Cloud Run) that scale with usage and integrate with Grafana's cloud infrastructure

Automation & Workflow Enablement

- Partner with RevOps, Demand Generation, Regional Marketing, and SDR teams to scope high-impact automation problems, identify bottlenecks, and build solutions with measurable business outcomes
- Design and deploy workflows using orchestration tools (n8n, Workato, or custom platforms) with CI/CD, testing, and production reliability standards
- Build systems designed for self-service with documentation, playbooks, and enablement materials that let partner teams operate independently

We invest heavily in developer productivity. You'll have access to AI coding assistants (Claude Code, Gemini CLI, OpenAI Codex, and others of your choice within security guidelines). We encourage pragmatic AI-assisted development paired with strong code review and quality standards.

Requirements

- 8+ years of software engineering experience with depth in backend development, systems integration, or data/analytics engineering
- 2+ years hands-on experience applying LLMs/AI to production workflows, not just prototypes
- Strong proficiency in Python and JavaScript/Node.js with Git-based workflows, code review practices, and testing discipline
- Hands-on experience with LLM frameworks and patterns including prompt engineering, RAG, function calling/tool use, structured output parsing, and evaluation
- Experience building and operating multi-agent systems at scale including agent decomposition, orchestration patterns (sequential chains, router/dispatcher, parallel fan-out), state management, and production monitoring
- You diagnose business problems before writing code. You think in workflows and outcomes, not just functions.
- Deep familiarity with Google Cloud Platform, BigQuery, and serverless/containerized services (Cloud Functions, Cloud Run)
- Understanding of LLM failure modes and production mitigations including confidence thresholds, fallback logic, human escalation, and cost/latency management
- Proven ability to identify high-leverage problems, push back on low-impact requests, and deliver end-to-end with minimal direction
- Fluent with AI-assisted development tools (GitHub Copilot, Cursor, Claude Code). You use AI to build AI systems.
- Clear technical communicator who can explain complex systems in simple terms to both engineers and business stakeholders

Bonus Points

- Experience with vector databases or retrieval pipelines (Pinecone, Weaviate, ChromaDB, Qdrant, pgvector)
- Familiarity with marketing or sales platforms (Salesforce, Customer.io, HubSpot, Marketo, Outreach)
- Experience with frontend frameworks (React, Slack Block Kit) for building user-facing AI tool interfaces
- Experience with observability tooling for AI systems (LangSmith, Weights & Biases, custom evaluation frameworks)
- Experience with workflow orchestration platforms (n8n, Temporal, Prefect, Airflow)
- Familiarity with Model Context Protocol (MCP) or similar standards for connecting AI systems to data sources
- Prior work automating marketing, sales, or customer success workflows in a B2B SaaS environment
- Active in open-source communities. Grafana is built on OSS and we value engineers who share that DNA.