AI Data Engineer

The Rose

3 days ago

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Job location

Remote

Tech stack

Java

API

Artificial Intelligence

Airflow

Amazon Web Services (AWS)

Azure

Big Data

Google BigQuery

Databases

Data Validation

Data Cleansing

Information Engineering

Data Infrastructure

ETL

Data Structures

Data Warehousing

Database Queries

Hadoop

Python

PostgreSQL

MongoDB

MySQL

Standard SQL

Data Streaming

Unstructured Data

Workflow Management Systems

Data Processing

Data Storage Management

Google Cloud Platform

Snowflake

Spark

Data Lake

Apache Flink

Kafka

Data Management

Machine Learning Operations

Stream Processing

Data Pipelines

Docker

Redshift

Job description

An AI Data Engineer is responsible for designing, building, and managing data infrastructure that supports AI and Machine Learning systems. This role focuses on creating scalable data pipelines, preparing high-quality datasets, and enabling efficient model training and deployment.

- Build and maintain data pipelines for AI/ML workflows

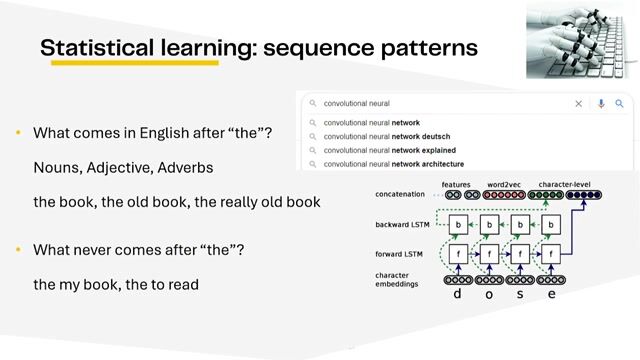

- Collect, clean, and preprocess structured and unstructured data

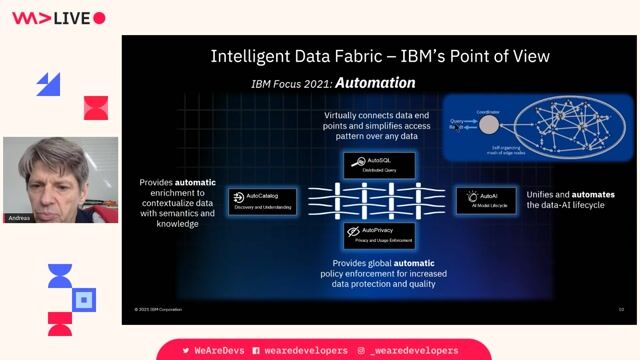

- Design and manage data lakes and data warehouses

- Develop ETL/ELT processes for large-scale data processing

- Optimize data storage and retrieval for AI model performance

- Integrate data from multiple sources (APIs, databases, streaming systems)

- Collaborate with data scientists and AI engineers to provide training datasets

- Implement data validation, quality checks, and governance policies

- Work with real-time and batch data processing systems

- Monitor and troubleshoot data pipeline performance issues

- Languages: Python, Java, SQL

- Big Data: Apache Spark, Hadoop

- Databases: MySQL, PostgreSQL, MongoDB

- Data Warehouses: Redshift, BigQuery, Snowflake

- Streaming: Kafka, Flink

- Orchestration: Apache Airflow

- Platforms: AWS, Azure, Google Cloud Platform

Requirements

- Strong knowledge of SQL for data querying and transformation

- Proficiency in Python or Java for data processing

- Understanding of data engineering concepts (ETL, data pipelines)

- Familiarity with databases (MySQL, PostgreSQL, MongoDB)

- Knowledge of data structures and algorithms

- Understanding of data preprocessing techniques for ML

- Problem-solving and analytical thinking

- Experience with big data tools (Apache Spark, Hadoop)

- Familiarity with AI/ML workflows and data requirements

- Knowledge of data warehouse tools (Amazon Redshift, Google BigQuery, Snowflake)

- Experience with streaming tools (Kafka, Flink)

- Understanding of MLOps practices

- Experience with cloud platforms like Amazon Web Services, Microsoft Azure, or Google Cloud Platform

- Familiarity with workflow orchestration tools (Apache Airflow)

- Basic knowledge of Docker and Kubernetes