Backend Engineer

Donato Technologies, Inc

Jackson Township, United States of America

yesterday

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Senior

Job location: Jackson Township, United States of America

Tech stack

Java

API

Amazon Web Services (AWS)

Azure

C++

Cloud Computing

Code Review

DevOps

Distributed Systems

Fault Tolerance

Python

Enterprise Messaging Systems

Performance Tuning

Redis

Software Engineering

Data Streaming

Systems Integration

Google Cloud Platform

Concurrency

Caching

Parallel Computation

Backend

Kubernetes

Kafka

Stream Processing

Docker

Microservices

Job description

- Design, develop, and maintain scalable, cloud-native backend services

- Build and support event-driven and asynchronous systems using streaming and messaging platforms (Kafka)

- Engineer low-latency, high-throughput systems with strong resiliency and fault tolerance

- Optimize performance for compute- and data-intensive workloads

- Collaborate with platform, DevOps, and front-office teams to deliver end-to-end solutions

- Participate in architecture reviews, code reviews, and production readiness assessments

- Troubleshoot production issues and drive performance, reliability, and scalability improvements

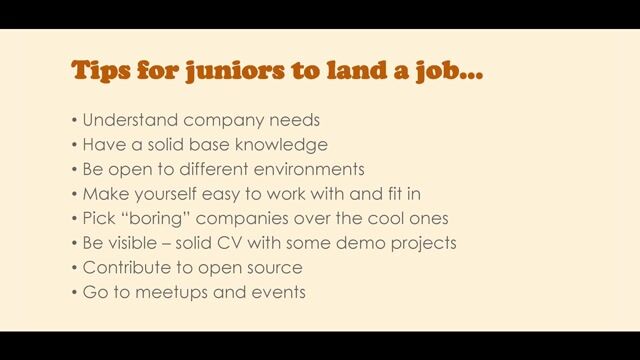

- Contribute to engineering standards, best practices, and mentoring

Requirements

- 6-10+ years of experience in backend software engineering

- Strong proficiency in Java, Python, or similar backend languages

- Solid experience building microservices and REST / async APIs

- Strong understanding of distributed systems, concurrency, and scalability patterns

- Hands-on experience with cloud platforms (AWS, Azure, or Google Cloud Platform)

- Experience with event streaming / messaging systems (Kafka or equivalent)

- Strong problem-solving skills and the ability to work in complex, evolving systems

Good to Have

- High-Performance Computing (HPC) experience, including performance tuning, parallelism, or compute-intensive workloads

- Experience using Redis for caching, pub/sub, or distributed state management

- Strong working knowledge of Apache Kafka, including producers/consumers, schemas, and stream processing

- Experience with Symphony-based integrations or platforms (messaging, workflows, or automation)

- Basic to intermediate exposure to C++, or comfort understanding and modifying C++ code

- Experience with low-latency / real-time systems

- Exposure to Docker, Kubernetes, and modern CI/CD pipelines