AI/LLM Engineer (GenAI + Agentic AI + Google Cloud Platform Microservices)

Job description

We are seeking highly skilled Specialty Software Engineers to support a large-scale AI-driven modernization initiative. This role focuses on building next-generation, cloud-native, low-latency applications. The ideal candidate will design and develop modular AI-driven systems, leveraging modern frameworks and platforms to enable scalable, intelligent enterprise solutions. This position involves working at the intersection of Generative AI (GenAI), Agentic AI, cloud-native microservices architecture, and developer productivity platforms.

- Design and develop cloud-native microservices using modern architectural patterns.

- Build high-performance, low-latency applications for enterprise use cases.

- Develop and extend Python-based SDKs and APIs.

- Ensure scalability, reliability, and performance optimization.

- Build and implement Agentic AI systems (NGA/synthetic agents).

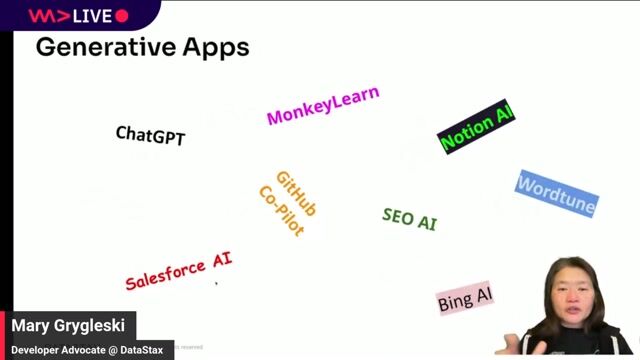

- Implement prompt engineering and model interaction strategies.

- Develop LLM-powered applications using LangChain and LangGraph.

- Work with RAG-based systems and multi-agent orchestration frameworks.

- Contribute to building intelligent workflows using Claude Code, Gemini, and Daven (nice to have).

- Build and deploy applications using Google Cloud Platform (GCP) services.

- Contribute to development efforts around CXK Studio.

- Ensure proper integration with enterprise data pathways and AI pipelines.

- Work with platforms such as Vertex AI and cloud-native AI services.

- Participate in Agile development processes.

- Contribute to continuous integration and deployment practices.

- Ensure code quality through reviews, testing, and best practices.

- Collaborate closely with product teams, AI/ML engineers, and platform/DevOps teams.

Requirements

- Strong proficiency in Python development.

- Experience building cloud-native microservices.

- Experience with SDK development and API integrations.

- Hands-on experience with LangChain/LangGraph and LLM-based applications.

- Strong understanding of distributed systems, API design, and microservices architecture.

- Experience implementing AI-driven applications in production environments.

- Experience working with Large Language Models (LLMs) and prompt engineering techniques.

- Knowledge of RAG (Retrieval-Augmented Generation) and vector databases/embeddings (preferred).

- Strong experience with Google Cloud Platform (GCP).

- Understanding of cloud-native architectures and services.

- Familiarity with Vertex AI and cloud-based AI services.

- Additional skills (nice to have): Frontend exposure to React, programming exposure to R, experience in the Financial Services domain, and familiarity with Claude/Gemini ecosystems and Daven tools.

Benefits & conditions

At PTR Global, we understand the importance of your privacy and security. We NEVER ASK job applicants to:

- Pay any fee to be considered for, submitted to, or selected for any opportunity.

- Purchase any product, service, or gift cards from us or for us as part of an application, interview, or selection process.

- Provide sensitive financial information such as credit card numbers or banking information. Successfully placed or hired candidates would only be asked for banking details after accepting an offer from us during our official onboarding processes as part of payroll setup.

Pay Range: $50 - $75

The specific compensation for this position will be determined by several factors, including the scope, complexity, and location of the role, as well as the cost of labor in the market; the skills, education, training, credentials, and experience of the candidate; and other conditions of employment. Our full-time consultants have access to benefits, including medical, dental, vision, and 401(k) contributions, as well as PTO, sick leave, and other benefits mandated by the state or locality where you reside or work.