Senior Data Infrastructure Engineer - Build a (self-hosted) Greenfield Data Lake

Job description

You report to the Senior Engineering Manager.

- Design the Data Lake architecture: Define the storage, ingestion, and transformation layers of a self-hosted data platform, selecting the right open-source-first tools for each.

- Build data pipelines: Create reliable pipelines that ingest data from across the company - infrastructure metrics, business systems, application logs, and financial data.

- Model and transform data: Design schemas and transformation layers that make raw data usable, consistent, and queryable.

- Integrate with existing systems: Connect to the company's current data sources (MariaDB, Prometheus, OpenSearch, internal APIs, and others) without disrupting production workloads.

- Operate what you build: Own the reliability and performance of the data platform, including monitoring, alerting, and capacity planning. Work closely with our Live Operations and Engineering teams to ensure it remains sustainable.

- Collaborate across teams: Work with Platform, Infrastructure, Network, and Product teams to understand their data and make it accessible.

- Document and share: Maintain clear documentation of the platform architecture, data catalog, and pipeline designs, so the foundation you build is understandable and extensible.

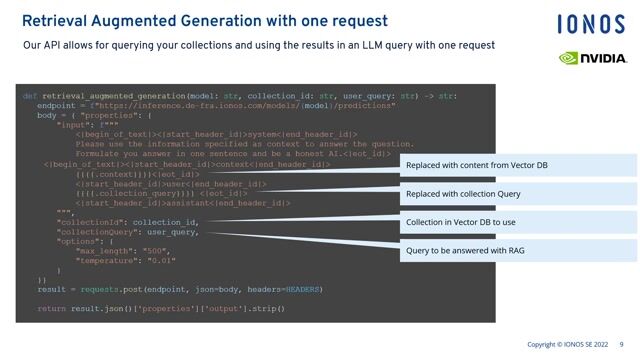

The data platform is greenfield - you'll have significant input into the final choices. As a starting point, we expect the stack to be self-hosted and open-source, in line with how i3D.net operates. Tools we'd expect you to evaluate and build on include:

- Storage: MinIO (S3-compatible object storage), Apache Iceberg or Delta Lake

- Processing: Apache Spark, Apache Flink

- Orchestration: Apache Airflow

- Query: Trino, ClickHouse

- Data sources you'll integrate with: MariaDB, Prometheus, OpenSearch, RabbitMQ, internal & external REST APIs

- Infrastructure: Linux (Debian), Docker, Kubernetes, Ansible

- You've designed and deployed the core Data Lake architecture on our own infrastructure

- Data from at least the major source systems is flowing into the platform reliably

- There's a working transformation layer that makes raw data queryable and usable

- Other teams can access and explore data without needing your help for every question

- The platform is documented, monitored, and ready to support analytics use cases as they emerge

- Pragmatic architect: You make sound technical decisions, document your reasoning, and know when "good enough" beats "perfect".

- Independent operator: You thrive with autonomy. You'll be the first data engineer - you need to drive your own roadmap with input from your manager and stakeholders.

- Collaborative mindset: You work well across teams to understand data sources and make them accessible.

- Remote vs Onsite: This is a hybrid role, so you'll spend some time working onsite at our Rotterdam office. If you're already in the Netherlands, great. If not, we're happy to support your move with our relocation services (a valid EU work permit is required).

- Build from zero: This is a rare greenfield opportunity to design an entire data platform from the ground up, on real hardware, at global scale.

- Full ownership: You'll make the architectural calls and see them through to production - no layers of approval or committee decisions.

- Infrastructure, not abstractions: Work with bare metal, your own data centers, and open-source tooling - not cloud dashboards.

- Competitive Perks: Annual bonus, 25 vacation days (excluding national holidays), travel allowance, and a solid pension plan.

- Career Growth: Access education reimbursement, career guidance, and opportunities to upskill.

- Stay Active: Free access to our in-house gym in Rotterdam.

Requirements

- Data engineering depth: 6-8 years of experience building and operating data pipelines, storage layers, and transformation frameworks in production environments.

- Open-source data stack experience: Hands-on with tools like Spark, Airflow, Trino, or similar - ideally self-hosted rather than managed cloud equivalents.

- Greenfield builder: You have experience building data infrastructure from scratch, not just maintaining existing platforms.

- Strong SQL and data modeling: You can design schemas that balance analytical flexibility with performance.

- Infrastructure-aware: Comfortable with Linux, containers, Kubernetes, and operating your own services. You don't need a cloud console to get things done.