AI Data Architect with Snowflake/AWS

kpg99 inc

Stone Park, United States of America

yesterday

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Job location

Remote

Stone Park, United States of America

Tech stack

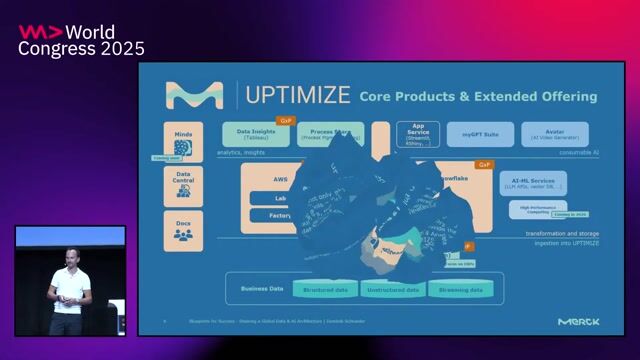

API

Artificial Intelligence

Amazon Web Services (AWS)

Business Analytics Applications

ARM

JIRA

Azure

Cloud Computing

Cloud Computing Security

Data Architecture

Information Leak Prevention

Data Security

Data Warehousing

Linux

DevOps

Python

Power BI

Data Streaming

Tableau

Enterprise Software Applications

Large Language Models

Snowflake

AI Platforms

Information Technology

Cloud Migration

Looker Analytics

GPT

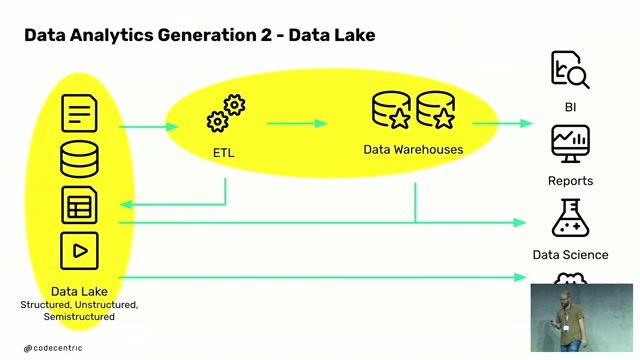

Data Pipelines

Automation Anywhere

Serverless Computing

ServiceNow

Job description

At its core, this is a hands-on platform and architecture role responsible for managing Hyatt's OpenAI/ChatGPT Enterprise environment while also shaping the company's broader data and AI infrastructure strategy (Snowflake + AWS).

What the role actually does:

- Administers and governs enterprise AI platforms (ChatGPT/OpenAI), including user access, security, compliance, and cost control

- Designs and enforces AI usage policies, guardrails, and responsible AI practices in partnership with cybersecurity

- Builds and supports AI-driven solutions (custom GPTs, RAG pipelines, LLM integrations) across business functions

- Acts as a bridge between AI platforms, enterprise data (Snowflake), and cloud infrastructure (AWS)

- Defines and drives enterprise data architecture standards (data flows, models, integration, governance, quality)

- Enables secure data access for AI use cases using Snowflake Cortex and modern data stack tools

- Automates platform operations and workflows using Python, APIs, and cloud-native services

- Integrates AI workflows with enterprise systems like ServiceNow and Jira

- Monitors usage, performance, and risk (security, compliance, hallucination, data leakage)

- Leads internal AI adoption through enablement, training, and community building across teams

- Partners closely with engineering, cybersecurity, data teams, and business stakeholders to deliver scalable AI solutions

Requirements

- Strong problem-solving skills in ambiguous, fast-paced environments

- Experience building scalable data pipelines and analytics solutions

- Familiarity with BI tools (Tableau, Power BI, ThoughtSpot, Looker)

- Experience with cloud migrations (on-prem to AWS/GCP)

- Strong stakeholder management and communication skills

- Ability to manage multiple initiatives across teams

- High attention to detail and commitment to quality

- Experience with large-scale enterprise Snowflake implementations

- Experience across Linux and Windows environments

- Knowledge of networking, cloud security groups, and policies

- Advanced degree in Computer Science or related field (preferred)

Preferred certifications

- AI/ML certifications

- AWS Certifications (Solutions Architect, Developer, DevOps)

- Other cloud certifications (Azure, GCP) are a plus