Site Reliability Engineer (SRE)

Job description

We are as passionate about our people as we are about our mission. We celebrate our employees in many ways, including our "Circle of Awesomeness" award ceremony and a day of employee celebration. We invest in the growth and development of our team members through ongoing learning opportunities, mentorship programs, internal mobility, and meaningful leadership relationships. We also know that nothing builds trust and collaboration like having fun. We hold an annual Dodgeball for Charity event at our Q2 Stadium in Austin, inviting other local companies to play and the community organizations we support to join us in raising money and awareness.

Q2 is seeking a skilled Senior Site Reliability Engineer (SRE) with strong Snowflake experience to support, optimize, and maintain our data platform. The ideal candidate will ensure the reliability, performance, scalability, and operational efficiency of Snowflake-based data systems while partnering closely with Data Engineering, DevOps, and Security teams.

A Typical Day:

Snowflake Platform Operations

- Manage and administer Snowflake environments (warehouses, databases, schemas, roles).

- Monitor system performance, query efficiency, and warehouse utilization.

- Optimize storage and compute costs through proactive analysis and tuning.

- Maintain and support Snowflake tasks, streams, pipes, and Snowpipe ingestion processes.

Reliability & Monitoring

- Implement monitoring, alerting, and dashboards for Snowflake performance and health.

- Troubleshoot data platform issues, latency problems, and ingestion failures.

- Ensure high availability and reliability for Snowflake workloads, and meet their SLAs.

Automation & DevOps

- Automate Snowflake deployments using Terraform, dbt, or similar tools.

- Build and maintain CI/CD pipelines for data platform changes (Kubernetes, ArgoCD, Docker, Helm).

- Create automation scripts (Python/SQL/Bash) for operational tasks and process improvements.

Data Pipeline Reliability

- Support ETL/ELT pipelines from an infrastructure perspective (Airflow, Kubernetes, ArgoCD).

- Ensure reliable data ingestion from cloud storage and streaming sources (Airflow, Kafka).

- Work closely with Data Engineering to maintain production-grade pipelines.

Cloud Infrastructure & Security

- Work with cloud platforms (AWS) for Snowflake integration.

- Manage IAM roles, access policies, and Snowflake RBAC for secure data access.

- Ensure compliance with data governance, auditing, and security standards.

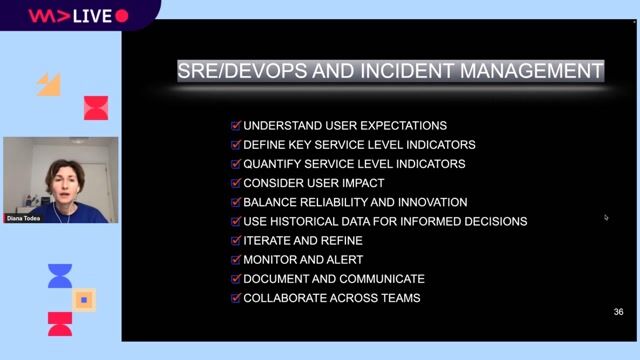

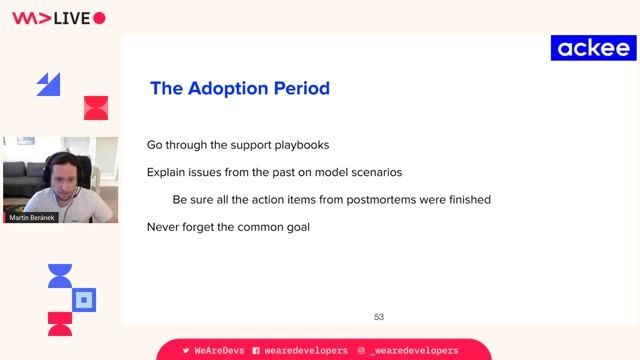

Incident & Change Management

- Participate in on-call rotations to handle data platform incidents.

- Perform root cause analysis (RCA) and implement long-term corrective actions.

- Maintain documentation, runbooks, and SOPs for Snowflake operations.

Requirements

- Typically requires a Bachelor's degree in a relevant field and a minimum of 5 years of related experience; or an advanced degree with 3+ years of experience; or equivalent related work experience.

- 5+ years of experience as an SRE, DevOps Engineer, or Data Platform Engineer.

- Hands-on experience with Snowflake administration, performance tuning, and security.

- Experience with SQL and understanding of database concepts.

- Proficiency in scripting (Python) for automation.

- Knowledge of Kubernetes or containerized environments.

- Experience with IaC tools (Terraform) and CI/CD pipelines.

- Familiarity with Airflow or similar orchestration tools.

- Experience working with AWS or Azure platforms.

- Hands-on experience deploying Helm charts to Kubernetes (preferably using GitOps tools like ArgoCD).

- Strong understanding of logging, monitoring, and alerting tools (Datadog, Splunk, CloudWatch, etc.).

Nice to Have

- Snowflake SnowPro certification (Core or Advanced).

- Experience with dbt for data transformations.

- Experience in cost optimization for large-scale Snowflake workloads.

This position requires fluent written and oral communication in English.

Applicants must be authorized to work for any employer in the U.S. We are unable to sponsor or take over sponsorship of an employment visa at this time.

Benefits & conditions

- Hybrid Work Opportunities

- Flexible Time Off

- Career Development & Mentoring Programs

- Health & Wellness Benefits, including competitive health insurance offerings and generous paid parental leave for eligible new parents

- Community Volunteering & Company Philanthropy Programs

- Employee Peer Recognition Programs - "You Earned it"