Machine Learning Engineer | Python | PyTorch | Distributed Training | Optimisation | GPU | Hybrid, San Jose, CA

Enigma LLC

Campbell, United States of America

yesterday

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Intermediate

Job location: Campbell, United States of America

Tech stack

Clean Code Principles

Flash Attention

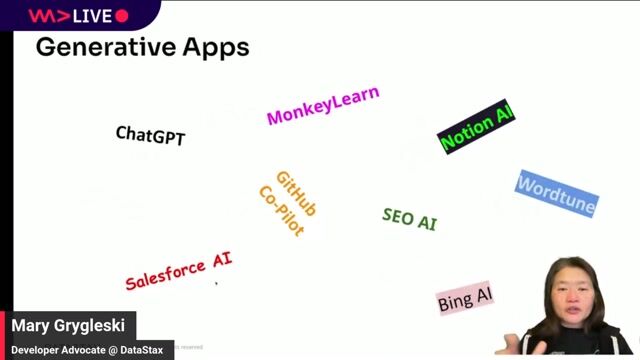

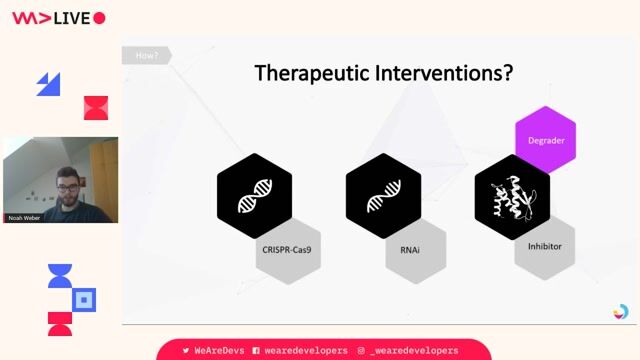

Artificial Intelligence

Computer Engineering

Continuous Integration

Distributed Computing Environment

Memory Management

Python

Machine Learning

NoSQL

TensorFlow

Azure

SQL Databases

Data Streaming

Parquet

Graphics Processing Unit (GPU)

Data Storage Management

Load Balancing

PyTorch

Autoscaling

Deep Learning

Caching

Information Technology

ONNX (Open Neural Network Exchange) Format

Machine Learning Operations

TensorRT

Data Pipelines

Job description

- Productize and optimize models from Research into reliable, performant, and cost-efficient services with clear SLOs (latency, availability, cost).

- Scale training across nodes/GPUs (DDP/FSDP/ZeRO, pipeline/tensor parallelism) and own throughput/time-to-train using profiling and optimization.

- Implement model-efficiency techniques (quantization, distillation, pruning, KV-cache, Flash Attention) for training and inference without materially degrading quality.

- Build and maintain model-serving systems (vLLM/Triton/TGI/ONNX/TensorRT/AITemplate) with batching, streaming, caching, and memory management.

- Integrate with vector/feature stores and data pipelines (FAISS/Milvus/Pinecone/pgvector; Parquet/Delta) as needed for production.

- Define and track performance and cost KPIs; run continuous improvement loops and capacity planning.

- Partner with ML Ops on CI/CD, telemetry/observability, model registries; partner with Scientists on reproducible handoffs and evaluations.

Requirements

- Bachelor's degree in Computer Science, Electrical/Computer Engineering, or a related field required; Master's preferred (or equivalent industry experience).

- Strong systems/ML engineering background with exposure to distributed training and inference optimization.

Industry Experience:

- 3-5 years in ML/AI engineering roles owning training and/or serving in production at scale.

- Demonstrated success delivering high-throughput, low-latency ML services with reliability and cost improvements.

- Experience collaborating across Research, Platform/Infra, Data, and Product functions.

Technical Skills:

- Familiarity with deep learning frameworks: PyTorch (primary), TensorFlow.

- Exposure to large model training techniques (DDP, FSDP, ZeRO, pipeline/tensor parallelism); distributed training experience a plus.

- Optimization: experience profiling and optimizing code execution and model inference, including quantization (PTQ/QAT/AWQ/GPTQ), pruning, distillation, KV-cache optimization, and Flash Attention.

- Scalable serving: autoscaling, load balancing, streaming, batching, caching; collaboration with platform engineers.

- Data & storage: SQL/NoSQL, vector stores (FAISS/Milvus/Pinecone/pgvector), Parquet/Delta, object stores.

- Ability to write performant, maintainable code.

- Understanding of the full ML lifecycle: data collection, model training, deployment, inference, optimization, and evaluation.