AI and Deep Learning Architect - Model Compression and Quantization

Kneron, Inc.

yesterday

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Senior

Job location

Tech stack

Algorithm Design

Computer Vision

C++

Nvidia CUDA

Computer Programming

Machine Learning

OpenCV

OpenCL

TensorFlow

Software Engineering

PyTorch

Caffe

Deep Learning

Parallel Computation

Information Technology

Job description

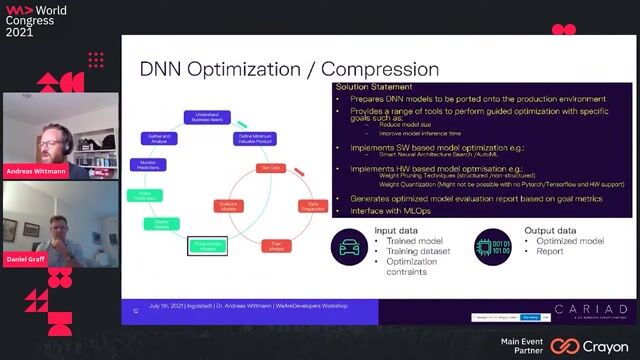

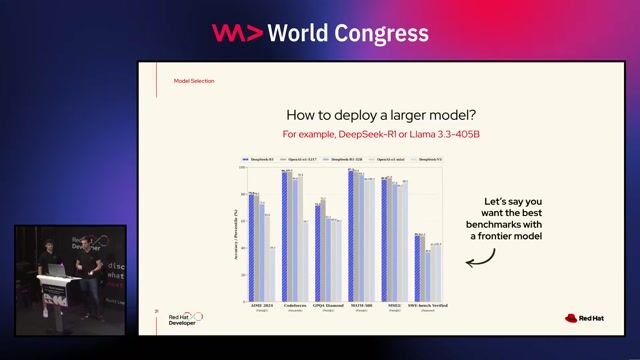

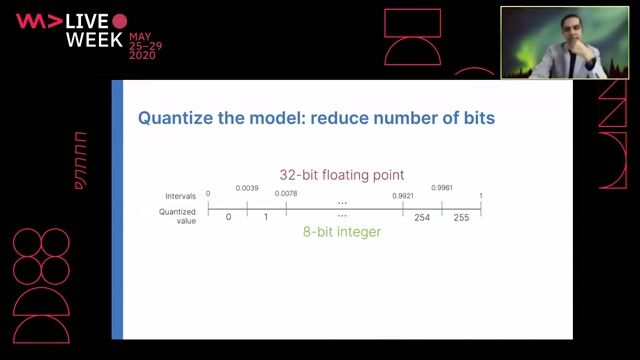

- Research and develop state-of-the-art model compression techniques for deep learning models, including quantization-aware training (QAT), model distillation, pruning, quantization, model binarization, and others.

- Implement novel deep neural network architectures and develop advanced training algorithms to support model structure training, automatic pruning, and low-bit quantization.

- Apply and optimize model compression and quantization techniques for a variety of models in computer vision, audio, and other applications.

- Research and optimize model compression and quantization techniques for the Kneron AI accelerator, and jointly optimize the hardware architecture for compressed models.

- Open to intern or full-time positions.

Requirements

- M.S. or Ph.D. in Computer Science, Machine Learning, Mathematics, or a similar field (Ph.D. preferred).

- 3+ years of industry/academia experience with deep learning algorithm development and optimization.

- 3-5 years of software engineering experience in an academic or industrial setting.

- Research experience with model compression and quantization techniques, including model distillation, pruning, post-training quantization, quantization-aware retraining, model binarization, and neural architecture search (NAS).

- Experience with accuracy-loss analysis for model compression and quantization is a strong plus, as is experience with noise modeling and noise analysis.

- Strong experience in C/C++ programming is a plus.

- Hands-on experience with computer vision and deep learning frameworks, e.g., OpenCV, TensorFlow, Keras, PyTorch, and Caffe.

- Ability to quickly adapt to new situations, learn new technologies, and collaborate and communicate effectively.

- Experience with parallel computing, GPU/CUDA, DSP, and OpenCL programming is a plus.

- A publication record at top-tier conferences, including but not limited to CVPR, ICCV, ECCV, NeurIPS (formerly NIPS), and ICML, is a strong plus.