Cloud DevOps Engineer

MILLENNIUM

Miami, United States of America

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Intermediate

Job location: Miami, United States of America

Tech stack

Java

Agile Methodologies

Amazon Web Services (AWS)

Software Applications

Unix

Cloud Computing

Continuous Delivery

Continuous Integration

Data Integrity

Relational Databases

Linux

DevOps

Distributed Computing Environment

Django

Middleware

Hadoop

Python

Octopus Deploy

RabbitMQ

Datadog

Flask

Snowflake

Grafana

Spark

Spring Boot

Backend

CloudFormation

FastAPI

Kubernetes

Information Technology

Apache Flink

Kafka

Non-relational Database

TeamCity

Terraform

Splunk

Jenkins

Job description

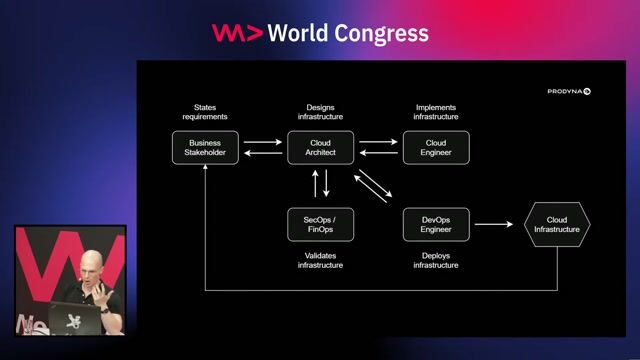

- Work closely with quants, portfolio managers, risk managers and other engineers in New York, Chicago, Miami, and Bangalore to develop data-intensive, multi-asset analytics for our Commodities platform

- Gather requirements and user feedback in collaboration with fellow engineers and project leads

- Design, build and refactor robust software applications with clean and concise code following Agile and continuous delivery practices

- Automate system maintenance tasks, end-of-day processing jobs, data integrity checks and bulk data loads/extracts

- Stay abreast of industry trends, new platforms and tools, and build the business case for adopting new technologies

- Develop new tools and infrastructure using Python (Flask/FastAPI) or Java (Spring Boot) and a relational data backend (AWS - Aurora/Redshift/Athena/S3)

- Support users and operational flows for quantitative risk, senior management and portfolio management teams using the tools developed

Requirements

- Advanced degree in computer science or another scientific field

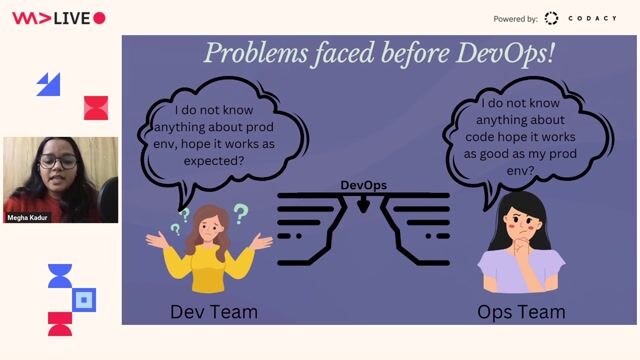

- 3+ years of experience with CI/CD tools such as TeamCity, Jenkins, Octopus Deploy and ArgoCD.

- AWS Cloud infrastructure design, implementation and support. Experience with multiple AWS Services.

- Infrastructure as Code: deploying cloud infrastructure using Terraform or CloudFormation.

- Knowledge of Python (Flask/FastAPI/Django)

- Demonstrated expertise in containerizing applications and orchestrating them in Kubernetes environments.

- Experience working on at least one monitoring/observability stack (Datadog, ELK, Splunk, Loki, Grafana).

- Strong knowledge of Unix or Linux

- Strong communication skills to collaborate with various stakeholders

- Able to work independently in a fast-paced environment

- Detail-oriented and organized, demonstrating thoroughness and strong ownership of work

- Experience working in a production environment.

- Some experience with relational and non-relational databases.

Qualifications/Nice to have

- Experience with a messaging middleware platform like Solace, Kafka or RabbitMQ.

- Experience with Snowflake and distributed processing technologies (e.g., Hadoop, Flink, Spark)