Databricks Engineer (PySpark and Data Lake)

Job description

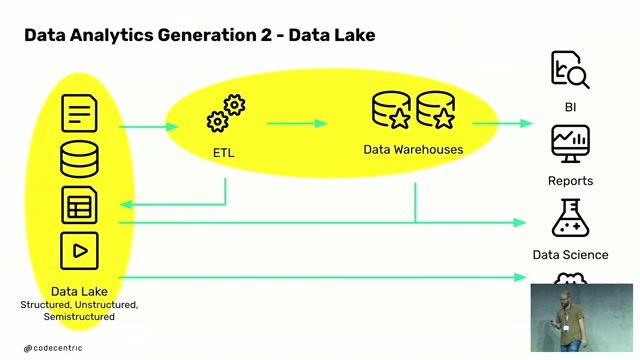

We are actively hiring experienced Senior Databricks Engineers to support a large-scale data modernization initiative. This role focuses on migrating legacy ETL workflows from Ab Initio to cloud-native Databricks pipelines using Apache Spark (PySpark).

Responsibilities:

- Analyze and migrate legacy ETL workflows from Ab Initio to PySpark
- Design, develop, and optimize scalable data pipelines on Databricks
- Build and maintain ETL/ELT pipelines integrating data from Snowflake and other enterprise sources
- Support batch and near real-time data processing
- Create data lineage, data flow diagrams, and optimize data processes
- Develop unit, integration, and reconciliation testing frameworks
- Support deployment, migration strategy, and production cutovers
- Work with scheduling tools like Control-M
- Collaborate with architects, analysts, and business stakeholders for UAT/FAT sign-offs

Requirements

- 8+ years of Data Engineering experience
- Strong hands-on experience with PySpark and Databricks
- Experience with Ab Initio to PySpark migration
- Expertise in ETL/ELT pipeline development
- Strong knowledge of Data Lakes, Data Warehousing, and Snowflake
- Experience in Data Lineage, Data Governance, and Data Quality
- Strong coding skills in Python
- Experience with batch and real-time processing frameworks

Preferred:

- Experience in Banking/Financial Services domain
- Experience with cloud platforms like Amazon Web Services / Microsoft Azure
- Strong troubleshooting and production support experience