Backend Engineer (APIs)

Job description

You'll be joining the APIs team, a small group of engineers responsible for the core platform that powers everything CARTO's customers build. The team works directly on top of the major cloud data warehouses - BigQuery, Snowflake, Redshift, Databricks, and Oracle - building the high-performance, low-latency layer that makes geospatial analysis possible at scale without ever moving data out of our customers' own systems.

This is not a role where you'll be handed a spec and asked to implement it. We're looking for someone who asks hard questions early, spots architectural problems before they become production incidents, and has a point of view on how things should be built. You'll work closely with data warehouse engineering teams at Google, Snowflake, and Databricks, and contribute to open-source geospatial communities that the wider industry depends on.

If you're energized by working at scale - billions of API calls, terabytes of geospatial data, customers who depend on the platform being fast and reliable - and you want to do it alongside a team that takes engineering craft seriously, we'd love to hear from you.

Location

This is a remote-first role, open to candidates based anywhere in Spain. We have offices in Madrid and Seville if you prefer to work in person or want a place to collaborate occasionally, but there's no expectation to use them.

You will

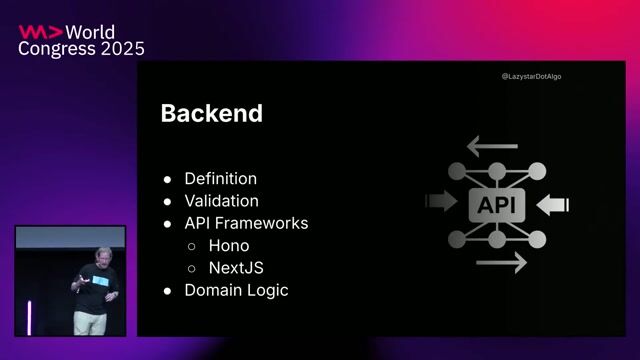

- Own the design and implementation of high-performance API endpoints that serve billions of requests per month, from initial architecture decisions through production monitoring and iteration.

- Push the boundaries of what's possible on top of BigQuery, Snowflake, Redshift, Databricks, and Oracle - you'll develop a deep understanding of their internals, query planners, and limits, and have direct access to the engineering teams behind them.

- Tackle hard distributed computing problems: query performance at massive scale, multi-tenant data isolation, low-latency geospatial operations on datasets measured in terabytes.

- Shape the technical direction of the APIs team - participating in architecture decisions, setting standards for code quality and testing, and raising the bar through code reviews and mentorship.

- Work at the intersection of open-source geospatial communities (PostGIS, GeoParquet, GDAL, Deck.gl) and the modern cloud data warehouse ecosystem, contributing to and learning from engineers at Uber, Google, Databricks, and Snowflake.

- Integrate AI tools pragmatically throughout your development workflow - not as a checkbox, but as a genuine productivity multiplier in how you design, build, and test.

Requirements

We're looking for a senior backend engineer who thrives on hard infrastructure problems and has the experience to own them independently - from design through production.

- 5+ years of backend engineering experience, with a track record of owning complex systems end-to-end - not just implementing specs but shaping how they're designed and evolve over time.

- Deep SQL fluency. You write and optimize non-trivial queries directly, understand execution plans, and know when the database is your best tool and when it isn't.

- Experience building and operating high-throughput APIs in production - you've thought hard about latency, reliability, and scaling bottlenecks, and you have scars to prove it.

- Comfort in a serverless and cloud-native environment, with practical experience in Docker, CI/CD pipelines, and cloud infrastructure (GCP or AWS).

- Strong opinions about software quality - you write tests not because you're told to but because you've seen what happens when you don't. You document what matters and keep it current.

- A collaborative, low-ego approach to engineering. You give direct feedback in code reviews, ask good questions when you don't know something, and make the people around you better.

Nice to have

- Experience with TypeScript and Node.js in a production backend context.

- Familiarity with geospatial technologies such as PostGIS, H3, or S2.

- Hands-on experience with one or more of our primary data warehouses: BigQuery, Snowflake, Redshift, Databricks, or Oracle.

- Previous work with CI/CD pipelines, Infrastructure as Code, or production monitoring and observability.

- Experience with Google Cloud Platform or AWS at an infrastructure level, beyond just deploying applications.

Benefits & conditions

- Compensation based on experience, discussed transparently during the process, plus an annual bonus of up to 10% based on company objectives

- Contribute to a platform used by top companies around the world. Your work will have a direct impact on our users and clients

- Access to our Employee Stock Options Plan

- Private Medical Insurance

- Flexible compensation

- Education stipend

- Remote work stipend

- English classes

About CARTO

We specialize in delivering innovative solutions and exceptional services to meet the diverse needs of our clients. With a strong commitment to quality and customer satisfaction, we strive to exceed expectations and drive success in every project we undertake.