DevOps / AI Infrastructure Engineer - GPU & Kubernetes

Role details

Job location

Tech stack

Job description

Senior DevOps / AI Infrastructure Engineer to design, build and operate GPU-accelerated AI/ML infrastructure. You will enable high-performance training and inference workflows by managing cloud/GPU platforms, Kubernetes clusters, IaC, and AI tooling (Triton, Kubeflow, MLflow). The role combines deep platform engineering with automation and close collaboration with ML engineers and R&D teams., * Design, deploy and operate GPU-enabled Kubernetes clusters and associated platform services for training and inference.

- Build and maintain CI/CD, model CI and MLOps pipelines using tools such as Kubeflow, MLflow and Triton.

- Implement and manage cloud infrastructure on Azure (and other clouds as needed), with GPU instances and storage for large datasets.

- Automate provisioning and configuration using Terraform, Ansible and scripting (Python, Bash).

- Optimize container orchestration, scheduling and GPU utilization for high-performance workloads.

- Integrate AI inference platforms (NVIDIA Triton) and support model serving at scale.

- Work with PLM/simulation and data teams to integrate model training and inference into engineering workflows.

- Monitor, troubleshoot and tune platform performance, reliability and cost.

- Define and enforce best practices for security, resource governance and data handling in AI pipelines.

- Document architectures, runbooks and operational procedures; transfer knowledge to engineering teams.

Requirements

Start: ASAP (or as agreed) Duration: 6 months (with possibility to extend) Experience: 8-10 years (including at least 1.5 years in DevOps/cloud/SRE focused on AI/ML) Language: English (fluent), * 8-10 years industry experience; minimum 1.5 years in DevOps, cloud engineering or SRE with AI/ML focus.

- Hands-on experience with major cloud providers (Azure preferred; experience with GCP/AWS is valuable).

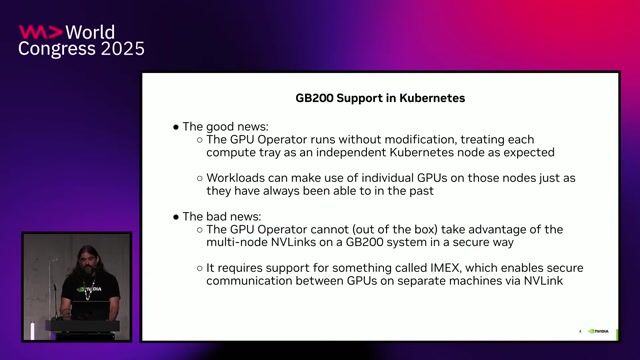

- Experience with GPU-accelerated environments and NVIDIA ecosystem.

- Deep understanding of Kubernetes and container orchestration for high-performance computing and model serving.

- Experience with AI platform tools: NVIDIA Triton, Kubeflow, MLflow (setup, pipelines, serving).

- Strong scripting and programming skills (Python, Bash) for automation and data processing.

- Proficiency with Infrastructure-as-Code: Terraform and configuration management with Ansible.

- Solid knowledge of storage, networking and security considerations for large ML workloads.

- Good communication and collaboration skills; able to work with ML researchers and engineering teams.

Preferred skills

- Experience integrating AI/ML workflows with PLM or simulation platforms.

- Familiarity with GPU scheduling solutions (NVIDIA GPU Operator, device plugins, Volcano, etc.).

- Knowledge of monitoring/observability for ML platforms (Prometheus, Grafana, ELK, metrics for GPU workloads).

- Experience with cost-optimisation and autoscaling strategies for GPU clusters.

- Familiarity with model optimization techniques (quantization, batching, mixed precision) and inference performance tuning.

Benefits & conditions

What we offer

- Work on cutting-edge AI infrastructure supporting R&D and engineering use cases.

- Opportunity to shape GPU and MLOps practices in a collaborative technical environment.

- Competitive compensation and Eindhoven-based role with flexible working arrangements.