Data Engineer - Dataiku Cloud H/F

Dataiku

4 days ago

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Experience level

Intermediate

Job location

Tech stack

API

Amazon Web Services (AWS)

Data analysis

Azure

Google BigQuery

Business Systems

Software as a Service

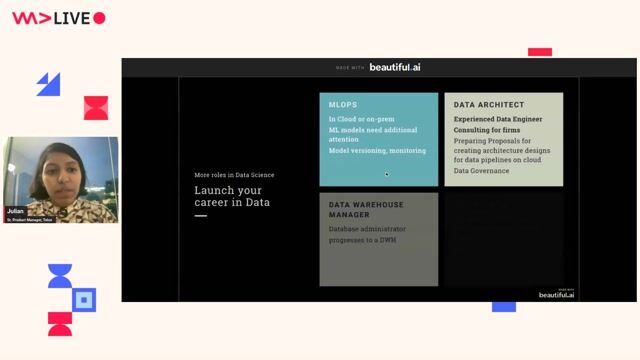

Data Governance

Data Systems

Python

Reliability Engineering

SQL Databases

Scripting (Bash/Python/Go/Ruby)

Google Cloud Platform

Snowflake

Kubernetes

Information Technology

Data Management

Dataiku

Data Pipelines

Databricks

Job description

Dataiku is looking for a Data Engineer to build reliable data pipelines for the Operations and Site Reliability Engineering (SRE) teams that support our SaaS offering. You will collaborate closely with our SRE and Product Operations teams to build data pipelines, custom applications, integrations, and automations that eliminate manual toil and streamline our infrastructure and data management. You will work extensively in Dataiku, building solutions, pipelines, and agents that accelerate our operations and analytics.

Responsibilities:

- Build pipelines that load data from various systems (AWS, Kubernetes, Prometheus, ...) into Dataiku via S3 or Snowflake

- Increase the robustness of existing production pipelines: identify bottlenecks and set up reliable monitoring, testing processes, and documentation templates

- In collaboration with the Enterprise Data and Analytics team and the Business Systems team, increase the reliability and scale of our product usage data. You will extract data from our core product, ensure accurate, real-time ingestion into downstream tools, and perform ad hoc analyses.

- Build custom applications and integrations that automate manual customer-operations tasks, supporting the Product Operations, Support, and SRE teams in their day-to-day work

- Contribute to the health of our data systems by designing and documenting good practices for our data usage

Requirements

- Bachelor's degree in Computer Science or a related field.

- 3+ years of experience in a similar role.

- Basic scripting or development experience involving Python and SQL.

- Experience with one or more of the following: Dataiku, Snowflake, Databricks, BigQuery...

- Knowledge of the public cloud ecosystem (AWS, Azure, GCP, ...).

- Experience orchestrating pipelines and implementing data governance practices.

- Experience leveraging APIs.

- Curiosity and desire to learn new skills and contribute to the team's success across multiple fields.

- Clear communication skills and ability to work with teammates from various backgrounds.

- Strong problem-solving skills and creativity in designing robust and easy-to-use solutions.

About the company

Dataiku is the Platform for AI Success, the enterprise orchestration layer for building, deploying, and governing AI. In a single environment, teams design and operate analytics, machine learning, and AI agents with the transparency, collaboration, and control enterprises require. Sitting above data platforms, cloud infrastructure, and AI services, Dataiku connects the full enterprise AI stack, empowering organizations to run AI across multi-vendor environments with centralized governance.

The world's leading companies rely on Dataiku to operationalize AI and run it as a true business performance engine delivering measurable value.