Data Architect (GCP, Snowflake)

Consolidated Storage Companies, Inc.

31 days ago

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Intermediate

Compensation: $38K

Job location: Remote

Tech stack

Architectural Patterns

Big Data

Bigtable

Google BigQuery

Cloud Computing

Cloud Database

Data Architecture

Information Engineering

Data Governance

Data Infrastructure

Data Integration

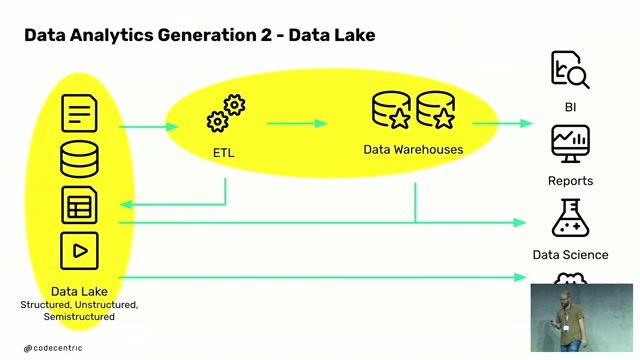

ETL

Data Structures

Data Systems

Data Vault Modeling

Database Design

Python

Performance Tuning

Ansible

SQL Databases

Unstructured Data

Snowflake

Data Strategy

Data Management

Physical Data Models

Terraform

Legacy Systems

Databricks

Job description

- Work in a supportive team of AI and Big Data enthusiasts.

- Engage with top-tier global enterprises and cutting-edge startups on international projects.

- Enjoy flexible work arrangements, allowing you to work remotely or from modern offices and coworking spaces.

- Accelerate your professional growth through career paths, knowledge-sharing initiatives, language classes, and sponsored training or conferences. This includes a partnership with Databricks, which offers industry-leading training materials and certifications.

- Choose your preferred form of cooperation: B2B or a contract of mandate, and make use of 20 fully paid days off.

- Participate in team-building events and utilize the integration budget.

- Celebrate work anniversaries, birthdays, and milestones.

- Access medical and sports packages, eye care, and well-being support services, including psychotherapy and coaching.

- Get full work equipment for optimal productivity, including a laptop and other necessary devices.

- With our backing, you can boost your personal brand by speaking at conferences, writing for our blog, or participating in meetups.

- Experience a smooth onboarding with a dedicated buddy, and start your journey in our friendly, supportive, and autonomous culture.

In this position, you will:

- Lead data architecture and engineering projects within GCP and Snowflake environments, including migration, transformation, and platform design.

- Design end-to-end data architectures (ingestion, storage, processing, and serving layers).

- Define and guide the implementation of data integration, ETL/ELT strategies, and pipeline architectures.

- Develop logical and physical data models, schemas, and data structures across business domains.

- Guide clients on data strategy, architecture decisions, and best practices across GCP.

- Design and enforce data governance, security, and access control frameworks.

- Work closely with security teams to ensure data protection and compliance.

- Lead client workshops to identify data sources, flows, and requirements.

- Define future-state architectures, roadmaps, and implementation plans.

- Collaborate with cross-functional teams (data scientists, engineers, business stakeholders) to deliver data solutions.

- Evaluate and select tools, frameworks, and architectural patterns.

- Provide technical leadership and mentorship to engineering teams.

- Oversee performance optimization, scalability, and cost efficiency of data platforms.

Requirements

- 7+ years of experience in Data Engineering, Data Architecture, or Data Infrastructure roles.

- 3+ years of experience working with GCP or similar cloud platforms (AWS, Azure).

- Proven experience in designing data architectures and large-scale data platforms.

- Strong experience with GCP managed services (BigQuery, Cloud SQL, Cloud Spanner, Cloud Bigtable).

- Hands-on experience with Snowflake, including data modeling and performance optimization.

- Strong expertise in data modeling, database design, and data architecture patterns.

- Experience with ETL/ELT design, data integration, and migration from legacy systems.

- Experience working with structured, semi-structured, and unstructured data.

- Understanding of data governance, security, and compliance frameworks.

- Experience with Infrastructure as Code (Terraform, Ansible, or similar).

- Proficiency in SQL and a good understanding of Python.

- Strong analytical, problem-solving, and communication skills.

- Experience working in a client-facing or consulting environment.

Nice to have:

- Experience with data platform migrations to Snowflake or cloud environments.

- Familiarity with data governance program implementation.

- Knowledge of Kimball, Data Vault, or other data modeling methodologies.

- Experience with multi-cloud or hybrid architectures.

- Familiarity with data observability and monitoring tools.

- Understanding of FinOps and cloud cost optimization.

Benefits & conditions

- Remote work possibility
- Challenging projects
- Autonomy at work
- Real impact on the company
- Knowledge sharing & trainings
- Team-building events
- High standard equipment
- Medical package
- Multisport
- MyBenefit Cafeteria
- Language classes
- Referral program
- EyeCare
- Paid time off

About the company

Addepto is a leading AI consulting (https://addepto.com/ai-consulting/) and data engineering (https://addepto.com/data-engineering-services/) company that builds scalable, ROI-focused AI solutions for some of the world's largest enterprises and pioneering startups, including Rolls-Royce, Continental, Porsche, ABB, and WGU. With an exclusive focus on Artificial Intelligence and Big Data, Addepto helps organizations unlock the full potential of their data through systems designed for measurable business impact and long-term growth.

The company's work extends beyond client engagements. Drawing on real-world challenges and insights, Addepto has developed its own product, ContextClue, and actively contributes open-source solutions to the AI community. This commitment to turning practical experience into scalable innovation has earned Addepto recognition from Forbes as one of the top 10 AI consulting companies worldwide.

As part of KMS Technology, a US-based global technology group, Addepto combines deep AI specialization with enterprise-scale delivery capabilities. This enables the partnership to move clients from AI experimentation to production impact, securely and at scale.