Senior System Architect, GPU

Job description

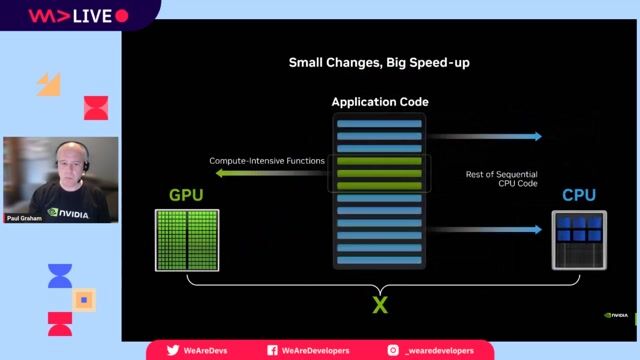

A key part of NVIDIA's strength is to innovate and deliver the highest performance in the world for AI and accelerated computing. We are constantly looking for ways to improve our GPU architecture and maintain our leadership. NVIDIA is seeking a motivated system architect to define future aspects of our GPU through employing pioneering technologies. Your role will be cross-disciplinary, working with software, ASIC design, verification, physical design, VLSI and platform teams. Our system architects excel at pushing the state of the art while making the best engineering trade-offs.

What you will be doing:

-

Develop GPU architecture innovations and improvements, optimizing along the axes of scalability/modularity, performance and power efficiency, area, yield, effort, and schedule.

-

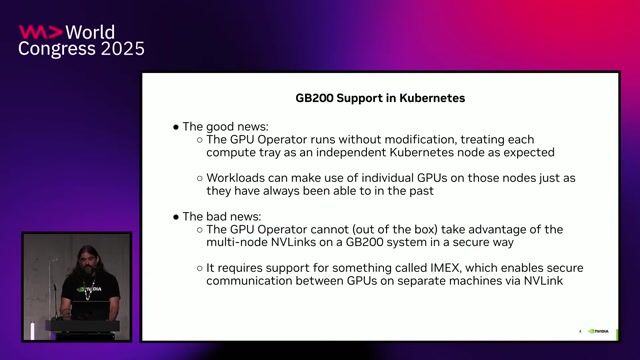

Benchmark GPU configurations (core count, memory and interconnect bandwidth) employing advanced packaging; identify optimal designs for future data center workloads.

-

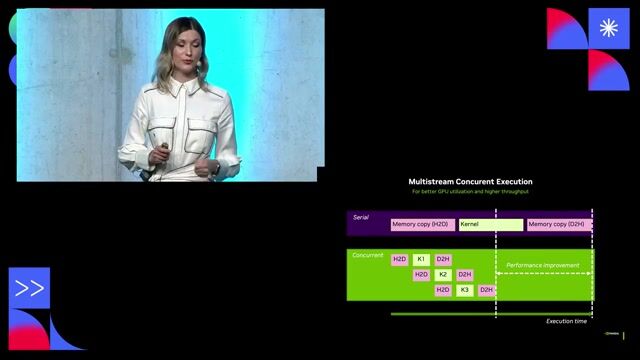

Develop and enhance performance analysis infrastructure, including performance simulators, testbench components and analysis tools, to evaluate configurations under different constraints.

-

Implement and maintain high-level functional and performance models. Analyze application workloads and performance simulation results to identify areas of architecture improvements.

-

Document architecture specifications; work with ASIC design, software, and VLSI teams to review and explore trade-offs, define solutions, and track progress.

-

Collaborate with other functional teams (Design, Floorplan, Packaging and Systems Engineering, etc) to validate packaging choices against performance, cost, and scalability targets.

Requirements

-

Master's/PhD in Computer Engineering, Computer Science or related fields (or equivalent experience)

-

A minimum of 8 years of relevant work experience in GPU or CPU System Architecture development

-

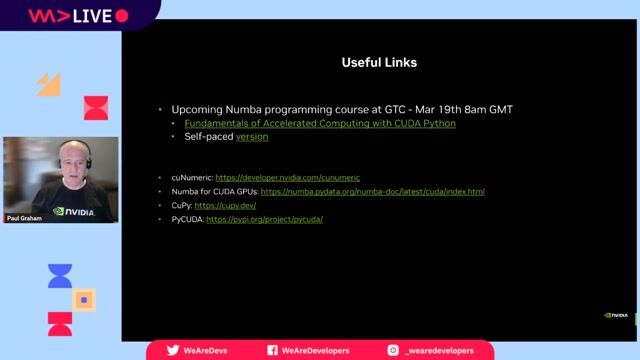

Proficiency in data analysis (Python, Excel) to correlate configuration changes with performance metrics.

-

Deep understanding of accelerated computing and AI data center requirements and tradeoffs, including performance bottlenecks, TCO, Power Delivery Network (PDN), DC Networking, etc

-

Strong communication and interpersonal skills, as well as the ability to thrive in a dynamic, collaborative, distributed team.

Ways to stand out from the crowd:

- Experience with GPU architecture, especially in off-chip IO, memory subsystem, and/or Network-on-Chip (NoC)/Interconnect. Knowledgeable in system level functions such as reset and boot, DFT, and power management.
- Expertise in analyzing performance scaling and bottlenecks at device and system levels for AI/accelerated computing workloads.
- Knowledgeable in modern packaging technologies, and their costs and benefits.
- Consistent track record of efficiently implementing complex architectural features.
- Outstanding problem-solving skills with a focus on optimizing performance, area, complexity, and power.

Benefits & conditions

Your base salary will be determined based on your location, experience, and the pay of employees in similar positions. The base salary range is 184,000 USD - 287,500 USD.