TELECOMMUTE Data Architect (Snowflake and DBT)

Job description

We are seeking a highly skilled Data Architect to lead the design, development, and optimization of our retail data ecosystem. This role will be instrumental in shaping our data strategy, enabling advanced analytics, and supporting business intelligence across finance, merchandising, supply chain, marketing, and customer experience domains.

The ideal candidate will have deep expertise in cloud data platforms (Snowflake), data transformation tools (DBT), and business intelligence platforms such as MicroStrategy and Power BI, along with a strong understanding of medallion architecture principles to support scalable and trusted data delivery.

- Architect and implement scalable, secure, and high-performance data solutions using Snowflake and DBT.

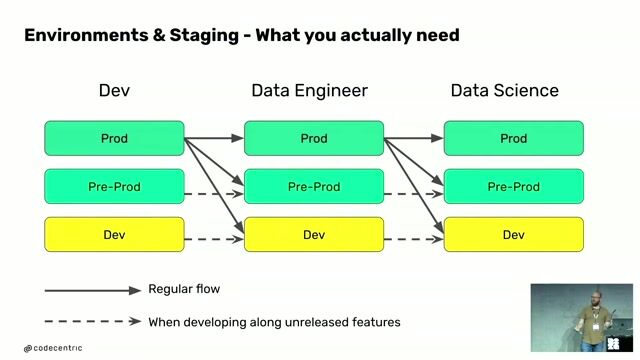

- Design and maintain a medallion architecture (Bronze, Silver, Gold layers) to support structured data ingestion, transformation, and consumption across the organization.

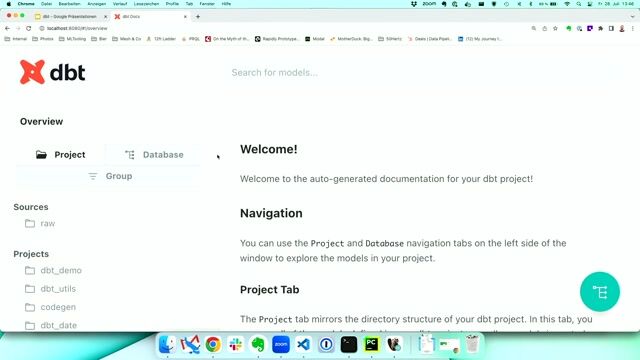

- Develop modular and reusable DBT models aligned with medallion layer principles to ensure data quality, lineage, and business logic consistency.

- Collaborate with business stakeholders to understand data requirements and translate them into technical solutions.

- Establish data governance practices including data quality, lineage, and metadata management.

- Optimize data pipelines for performance and cost-efficiency in a cloud-native environment.

- Direct experience with dimensional modeling (star and snowflake schemas).

- Lead data integration efforts across internal systems (ERP, POS, CRM) and external sources (vendors, third-party APIs).

- Support and enhance reporting and analytics capabilities, ensuring alignment with data architecture and semantic layer design.

- Ensure metric consistency across BI tools (MicroStrategy, Power BI).

- Mentor data engineers and analysts on best practices in data modeling, transformation, and documentation.

- Architect data solutions supporting core retail use cases including inventory optimization, sales performance, promotions, pricing, and omnichannel customer analytics.

- Stay current with emerging technologies and trends in data architecture and retail analytics.

Requirements

- Bachelor's or Master's degree in Computer Science, Information Systems, or a related field.

- 5+ years of experience in data architecture or data engineering roles.

- Proven experience with Snowflake, DBT, and SQL.

- Strong understanding of ETL/ELT processes, data warehousing, and cloud data platforms.

- Experience designing and implementing medallion architecture in a modern data stack.

- Strong background in documenting historical processes and designing future workflow documentation.

- Hands-on experience with MicroStrategy, including schema design, dashboard development, and performance tuning.

- Familiarity with CI/CD pipelines, version control (Git), and agile development practices.

- Excellent communication and stakeholder management skills.

Preferred Qualifications

- Experience with tools like Talend, Fivetran, Airflow, Looker, or Power BI.

- Knowledge of Python or dbt macros for advanced transformations.

- Advanced knowledge of Snowflake Data Engineering & Optimization.

- Understanding of data privacy regulations (e.g., GDPR, CCPA) and compliance in retail.

- Experience with real-time data streaming and event-driven architectures.