Principal Data Architect - Modern Data Platforms & Data Mesh

Requirements

-10+ years of professional software and data engineering experience, with a significant portion in a technical leadership or architectural role.

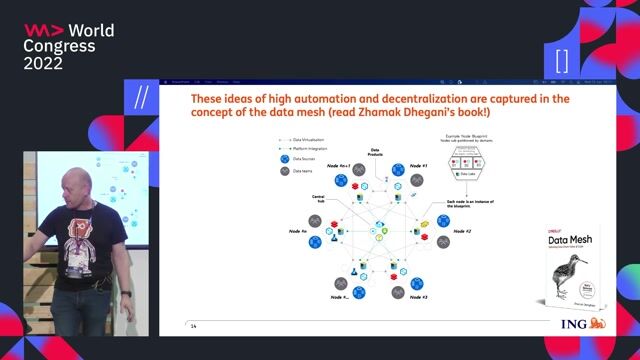

-Proven track record of transforming monolithic data warehouses into decentralized Data Mesh architectures, including experience with data contracts and federated governance.

-Deep, hands-on expertise with modern data platforms such as Databricks and Snowflake, including optimizing compute clusters and managing costs.

-Strong proficiency in distributed computing frameworks (Apache Spark) and open table formats (Delta Lake, Apache Iceberg, Apache Hudi).

-Extensive experience implementing compliance with data privacy regulations (GDPR, CCPA) through technical controls such as data masking, tokenization, and encryption at rest and in transit.

-Expert-level proficiency in Python, SQL, and Scala/Java.

-You treat data pipelines as code, strictly adhering to CI/CD, unit testing, and version control best practices.

-Deep understanding of event-driven architectures (Kafka, Kinesis) and workflow orchestration and transformation tools (Airflow, Dagster, dbt).

-Hands-on experience implementing data discovery and governance tools such as Unity Catalog, Alation, or Collibra.

-Strong understanding of the end-to-end machine learning lifecycle, including model registry, feature stores, and model serving.