Senior Software Engineer, AI Frameworks

Job description

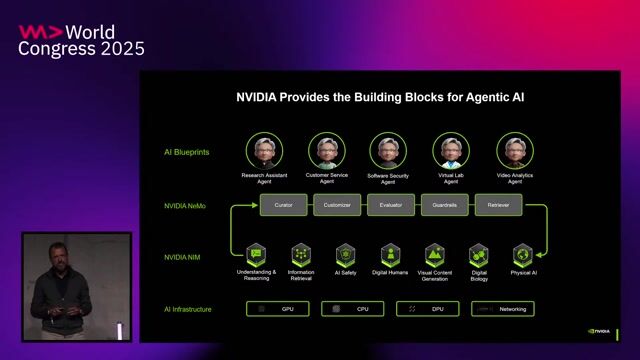

We are seeking a Senior Software Engineer to drive integration of the NVIDIA Grove project within Dynamo and across a set of leading open-source AI frameworks. In this role, you will build production-grade software that enables seamless adoption, scaling, and operation of Grove capabilities across environments such as Dynamo, llm-d, Ray, PyTorch, and other emerging frameworks in the AI ecosystem. You will collaborate across engineering teams and the open-source community to deliver robust integrations, reference implementations, and developer-focused tooling.

What you'll be doing:

- Design and implement end-to-end integrations of Grove with open-source AI frameworks (e.g., Dynamo, llm-d, Ray, PyTorch, and related ecosystem projects).

- Build and maintain adapters, plugins, operators, and/or runtime components that enable Grove features to work smoothly across training and inference stacks.

- Partner with framework owners to upstream changes, contribute patches, and ensure long-term maintainability of integrations.

- Develop reference workflows, sample apps, and best-practice guides that accelerate adoption by users and partners.

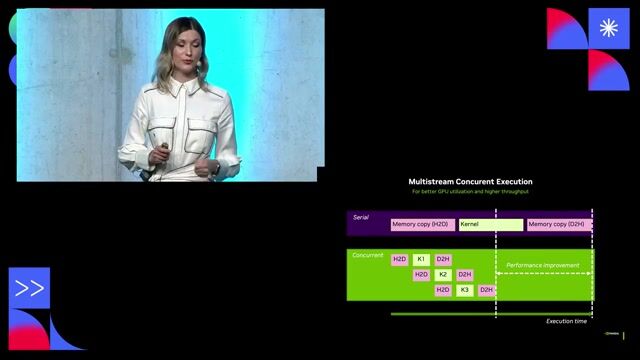

- Optimize performance, scalability, and reliability for distributed training/inference, including multi-node and multi-GPU environments.

- Improve observability and operational readiness (metrics, logging, tracing, debugging tools) for Kubernetes-based deployments.

- Participate in technical design reviews, define APIs/contracts, and ensure compatibility across versions of frameworks and dependencies.

- Diagnose complex issues spanning containers, networking, scheduling, CUDA/GPU utilization, and framework runtime behavior.

Requirements

- BS/MS/PhD in Computer Science, Electrical Engineering, or related field (or equivalent experience)

- 5+ years of proven experience in a related field

- Hands-on experience integrating with at least one major AI framework/runtime (e.g., PyTorch, Ray, Triton Inference Server ecosystem, distributed runtimes, model serving stacks).

- Solid understanding of AI workloads: model development basics, training vs. inference tradeoffs, and performance considerations (throughput/latency, batching, memory).

- Experience with distributed systems concepts (RPC, scheduling, fault tolerance, resource management).

- Practical Kubernetes experience: deploying and operating services/jobs, Helm/Kustomize, operators/controllers (nice to have), and debugging clusters.

- Familiarity with containers and cloud-native tooling (Docker, container registries, CI/CD pipelines).

- Strong software engineering experience in Go, C++ and/or Python, with a track record of shipping reliable systems.

- Strong interpersonal skills and ability to collaborate across teams and with open-source communities.

- Exceptional collaboration, communication, and documentation habits.

Ways to stand out from the crowd:

- Open-source contributions to Dynamo, PyTorch, Ray, llm-d, the Kubernetes ecosystem, or related ML infrastructure projects.

- Experience with large-scale model serving, distributed inference, or multi-tenant AI platforms.

- Experience building SDKs/APIs or developer tooling that improves integration usability.

- Knowledge of GPU performance profiling and optimization (Nsight tools or similar), and/or kernel-level performance tuning.

- Experience with reproducibility, packaging, versioning, and compatibility testing across fast-moving dependencies.

Benefits & conditions

NVIDIA is widely considered to be one of the technology world's most desirable employers. We have some of the most experienced and hard-working people in the world working for us. Are you creative and autonomous? Do you love a challenge? If so, we want to hear from you.

Your base salary will be determined based on your location, experience, and the pay of employees in similar positions. The base salary range is 152,000 USD - 241,500 USD for Level 3, and 184,000 USD - 287,500 USD for Level 4. You will also be eligible for equity and benefits.

Applications for this job will be accepted at least until April 3, 2026. This posting is for an existing vacancy. NVIDIA uses AI tools in its recruiting processes.

NVIDIA is committed to fostering a diverse work environment and proud to be an equal opportunity employer. As we highly value diversity in our current and future employees, we do not discriminate (including in our hiring and promotion practices) on the basis of race, religion, color, national origin, gender, gender expression, sexual orientation, age, marital status, veteran status, disability status, or any other characteristic protected by law.