AWS Data Engineer With Databricks and Lakehouse

Role details

Job location

Tech stack

Requirements

- 7+ years of experience in data engineering or related roles.
- Strong hands-on experience with the Databricks platform.
- Proficiency in Python for data processing and pipeline development.
- Strong experience with PySpark and distributed data processing.

-

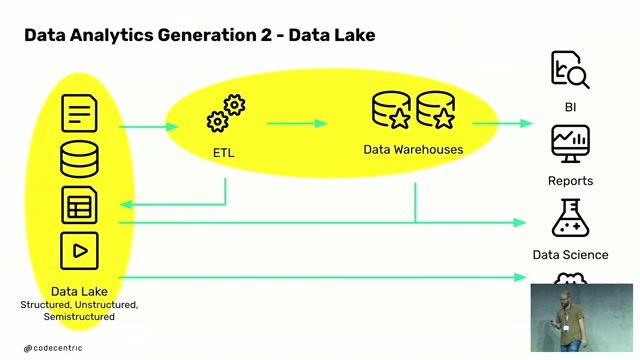

Deep understanding of ETL/ELT pipeline design and orchestration.

- Experience with Databricks Unity Catalog and data governance practices.
- Strong knowledge of AWS cloud services, especially:
  - S3
  - IAM
  - VPC
  - Exposure to Glue / Lambda is a plus
- Solid understanding of data lake / lakehouse architecture patterns.
- Experience building dashboards and supporting analytics use cases.
- Strong SQL skills and performance tuning expertise.
- Experience in data modeling and schema design.
- Good problem-solving and debugging skills.
- Strong communication and stakeholder management abilities.