Data Engineer - SQL - PySpark - Data Modelling - Databricks - Lakehouse Architectures

NEXERA CONSULTING LLC

6 days ago

Role details

- Contract type: Temporary contract
- Employment type: Full-time (> 32 hours)
- Working hours: Regular working hours
- Languages: English
- Job location: Remote

Tech stack

Data analysis

Data Validation

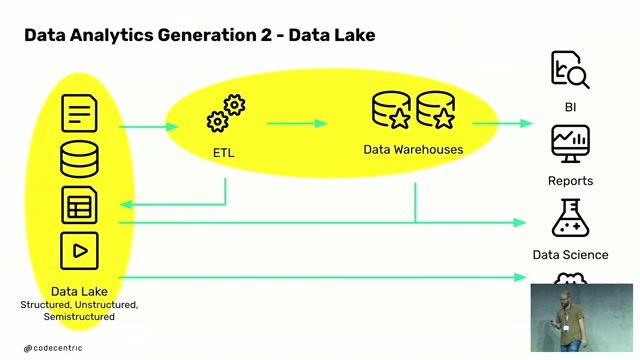

Data Infrastructure

Data Transformation

SQL Databases

PySpark

Databricks

Job description

- Create and maintain curated datasets designed for analytics, using dimensional and semantic modelling approaches

- Build and optimise transformation pipelines using SQL and PySpark within a lakehouse environment

- Work closely with Data Engineers to enhance and scale the data serving layer

- Turn business requirements into well-structured, maintainable data models

- Embed data quality checks and testing into every stage of data transformation to ensure reliable outputs

- Contribute to modelling standards, documentation, and broader architectural discussions

Requirements

- Experience in an Analytics Engineering or similar data-focused role

- Strong SQL capability, including building complex transformations and data models

- Hands-on experience with PySpark and modern data platforms such as Databricks

- Good understanding of dimensional modelling and core analytics engineering principles

- Ability to clearly communicate with both technical and non-technical audiences

- Experience with Databricks or other lakehouse technologies