Data Engineer (Snowflake + DBT)

JKV International

7 days ago

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Experience level

Intermediate

Job location

Tech stack

Airflow

Amazon Web Services (AWS)

Azure

Cloud Computing

Continuous Integration

Information Engineering

ETL

Data Vault Modeling

Data Warehousing

Python

Performance Tuning

Query Optimization

Data Streaming

Data Processing

Scripting (Bash/Python/Go/Ruby)

Freeform SQL

Google Cloud Platform

SQL Optimization

Snowflake

Data Build Tool (dbt)

Git

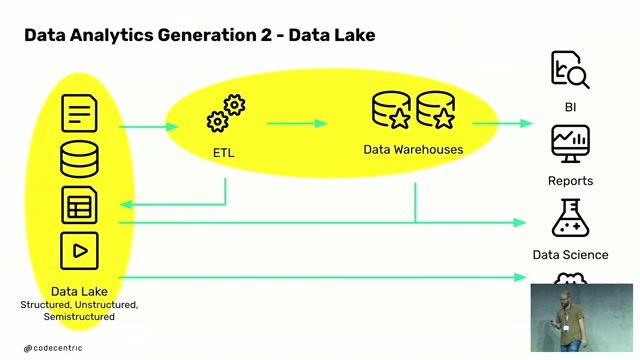

Data Lake

Star Schema

Kafka

Software Version Control

Data Pipelines

Job description

We are looking for a skilled Data Engineer with strong expertise in Snowflake and DBT to design, build, and optimize scalable data pipelines and modern data warehouse solutions. The ideal candidate should have hands-on experience in ELT frameworks, cloud platforms, and data modeling.

- Design, develop, and maintain scalable data pipelines using Snowflake and DBT

- Build and optimize data warehouse solutions, including schema design and performance tuning

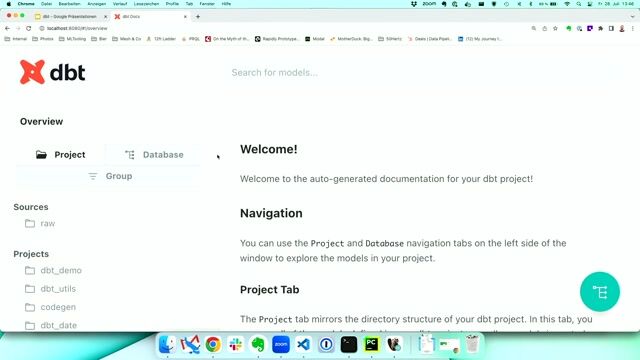

- Develop modular and reusable data models using DBT (Data Build Tool)

- Implement and manage ELT/ETL processes for ingesting data from multiple sources

- Write complex SQL queries and ensure data quality, validation, and testing

- Collaborate with business and technical teams to gather and translate data requirements

- Automate workflows using orchestration tools (Airflow or similar)

- Maintain documentation for data pipelines, models, and transformations

- Troubleshoot and optimize data pipelines for performance and scalability

Requirements

- Strong experience with Snowflake (data warehousing, performance tuning, optimization)

- Hands-on experience with DBT (dbt Core / dbt Cloud)

- Advanced SQL skills (query optimization, joins, window functions)

- Experience with data modeling (Star Schema, Snowflake Schema, Data Vault)

- Knowledge of ETL/ELT processes and data pipeline architecture

- Experience with Python or scripting for data processing

- Familiarity with cloud platforms (AWS / Azure / Google Cloud Platform)

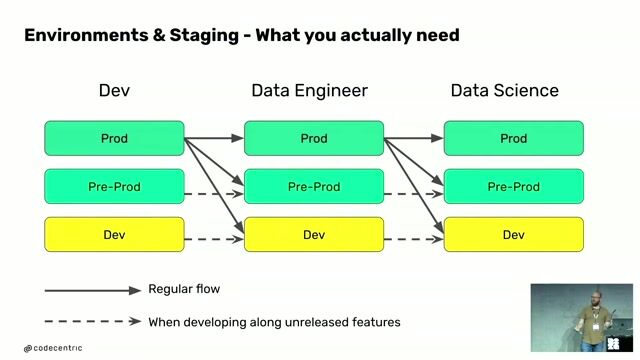

- Experience with CI/CD, Git, and version control

- Strong understanding of data quality, governance, and testing

Preferred Skills

- Experience with Airflow / orchestration tools

- Knowledge of streaming tools (Kafka, etc.)

- Exposure to data lake / lakehouse architecture

- Experience in Agile/Scrum environments

Experience

- 10+ years of Data Engineering experience

- 4+ years hands-on with Snowflake + DBT