Data Engineer

Raas Infotek LLC

Colorado City, United States of America

5 days ago

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Senior

Job location: Colorado City, United States of America

Tech stack

Java

Airflow

Amazon Web Services (AWS)

Azure

Big Data

Google BigQuery

Data Architecture

Information Engineering

Data Governance

ETL

Data Warehousing

DevOps

Distributed Systems

Hadoop

Hive

Python

Machine Learning

Software Tools

SQL Databases

Data Streaming

Workflow Management Systems

Data Processing

Google Cloud Platform

Snowflake

Spark

Containerization

Data Lake

Kubernetes

Apache Flink

Luigi

Kafka

Video Streaming

Stream Analytics

Data Pipelines

Docker

Redshift

Job description

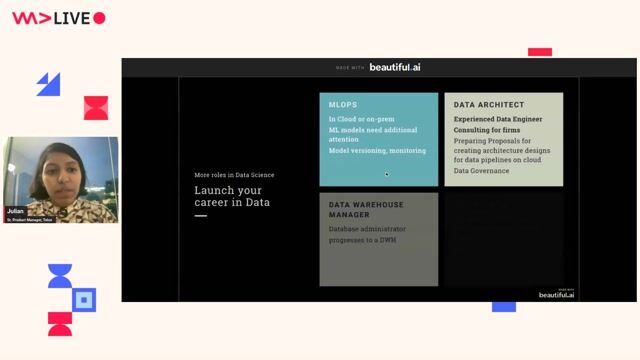

- Design, develop, and maintain scalable ETL/ELT data pipelines

- Build and optimize data architectures, data lakes, and data warehouses

- Ensure data quality, integrity, and reliability across systems

- Work with cross-functional teams, including Data Scientists, Analysts, and Product teams

- Optimize performance of large-scale data processing systems

- Implement real-time and batch data processing solutions

- Develop and enforce data governance and security policies

- Mentor junior and mid-level data engineers

- Stay current with the latest data engineering tools and technologies

Requirements

We are seeking a highly experienced Senior Data Engineer to design, build, and optimize scalable data pipelines and architectures. The ideal candidate will have deep expertise in data engineering, strong problem-solving skills, and experience working with large-scale distributed systems. You will play a key role in enabling data-driven decision-making across the organization.

- 12+ years of experience in Data Engineering / Big Data

- Strong expertise in SQL and Python/Scala/Java

- Hands-on experience with Big Data technologies (e.g., Hadoop, Spark, Hive)

- Experience with ETL tools and data pipeline frameworks

- Strong knowledge of data warehousing concepts and platforms (Snowflake, Redshift, BigQuery, etc.)

- Experience with cloud platforms (AWS / Azure / Google Cloud Platform)

- Proficiency in data modeling techniques

- Experience with workflow orchestration tools (Airflow, Luigi, etc.)

- Understanding of streaming technologies (Kafka, Flink, etc.)

- Strong problem-solving and analytical skills

- Experience with DevOps practices and CI/CD pipelines

- Knowledge of containerization (Docker, Kubernetes)

- Experience in real-time analytics and data streaming

- Exposure to machine learning data pipelines

- Certification in cloud platforms (AWS/Azure/Google Cloud Platform)

Soft Skills

- Strong communication and leadership skills

- Ability to work in a fast-paced, collaborative environment

- Strategic thinking and decision-making ability

- Mentoring and team leadership experience