Senior AI and ML HPC Cluster Engineer

Job description

NVIDIA has continuously reinvented itself over two decades. Our invention of the GPU in 1999 sparked the growth of the PC gaming market, redefined modern computer graphics, and revolutionized parallel computing. More recently, GPU deep learning ignited modern AI - the next era of computing. NVIDIA is a "learning machine" that constantly evolves by adapting to new opportunities that are hard to solve, that only we can tackle, and that matter to the world. This is our life's work, to amplify human imagination and intelligence. Make the choice to join us today!

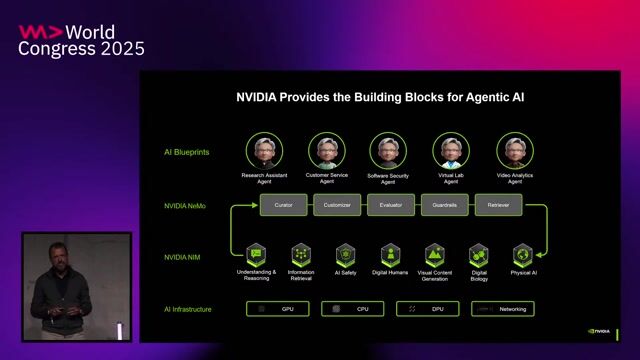

As a member of the GPU AI/HPC Infrastructure team, you will provide leadership in the design and implementation of groundbreaking GPU compute clusters that run demanding deep learning, high-performance computing, and other computationally intensive workloads. We seek a technical leader to identify architectural changes and/or completely new approaches for our GPU compute clusters. As an expert, you will help us with the strategic challenges we encounter, including compute, networking, and storage design for large-scale, high-performance workloads; effective resource utilization in a heterogeneous compute environment; evolving our private/public cloud strategy; capacity modeling; and growth planning across our global computing environment.

What you'll be doing:

- Provide leadership and strategic guidance on the management of large-scale HPC systems including the deployment of compute, networking, and storage.

- Develop and improve our ecosystem around GPU-accelerated computing including developing scalable automation solutions

- Build and maintain AI and ML heterogeneous clusters on-premises and in the cloud

- Create and cultivate customer and cross-team relationships to reliably sustain the clusters and meet users' evolving needs

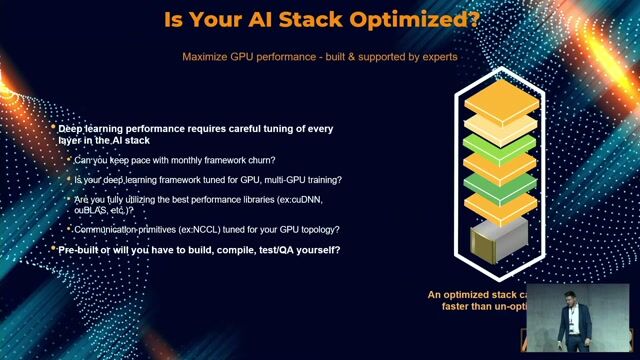

- Support our researchers to run their workloads including performance analysis and optimizations

- Conduct root cause analysis and suggest corrective action

- Proactively find and fix issues before they occur

Requirements

- Bachelor's degree in Computer Science, Electrical Engineering, or a related field, or equivalent experience

- 5+ years of experience designing and operating large-scale compute infrastructure

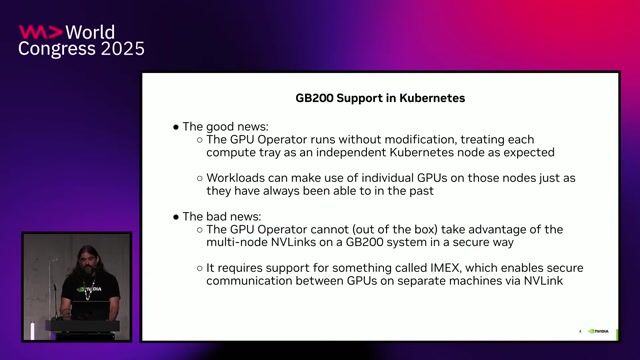

- Experience with AI/HPC advanced job schedulers, such as Slurm, K8s, PBS, RTDA or LSF

- Proficient in administering CentOS/RHEL and/or Ubuntu Linux distributions

- Solid understanding of cluster configuration management tools such as Ansible, Puppet, Salt

- In-depth understanding of container technologies like Docker, Singularity, Podman, Shifter, Charliecloud

- Proficiency in Python programming and bash scripting

- Applied experience with AI/HPC workflows that use MPI

- Experience analyzing and tuning performance for a variety of AI/HPC workloads.

- Passion for continual learning and staying ahead of emerging technologies and effective approaches in the HPC and AI/ML infrastructure fields.

Ways to stand out from the crowd:

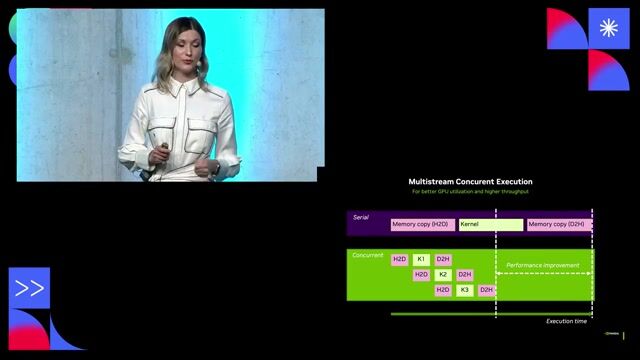

- Background with NVIDIA GPUs, CUDA programming, NCCL, and MLPerf benchmarking

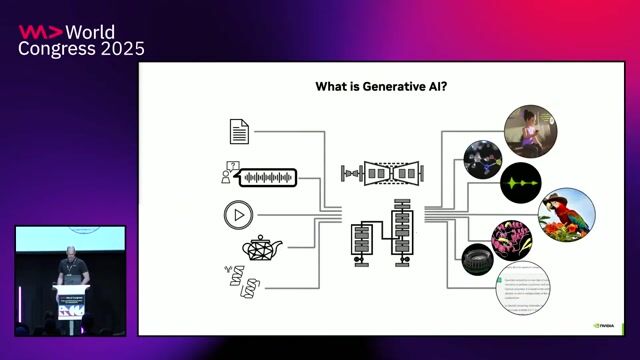

- Experience with Machine Learning and Deep Learning concepts, algorithms and models

- Familiarity with InfiniBand with IPoIB and RDMA

- Understanding of fast, distributed storage systems like Lustre and GPFS for AI/HPC workloads

- Familiarity with deep learning frameworks like PyTorch and TensorFlow

Benefits & conditions

Your base salary will be determined based on your location, experience, and the pay of employees in similar positions. The base salary range is 152,000 USD - 241,500 USD for Level 3, and 184,000 USD - 287,500 USD for Level 4.