Data Scientist

Job description

- Design, build, and maintain scalable data pipelines and infrastructure supporting analytics, reporting, and machine learning use cases
- Develop and optimize ETL and ELT workflows for structured and unstructured data sources
- Build and maintain data integration layers across cloud, web, and on-premise systems
- Ensure data quality, consistency, security, and reliability across data pipelines and storage systems
- Develop data processing solutions using Python, SQL, and Bash scripting in Linux environments
- Construct and optimize complex queries across multiple data sources (e.g., PostgreSQL, MySQL, Neo4j, RDS)
- Develop and manage ingestion pipelines using tools such as Apache NiFi
- Process and transform large-scale datasets from diverse structured and unstructured sources
- Develop reusable, tested, and reproducible data workflows and Python-based modules
- Use Elasticsearch and Kibana for search, indexing, and data visualization use cases
- Document technical solutions, data pipelines, and methodologies for both technical and non-technical stakeholders
- Communicate findings through written reports, dashboards, and oral briefings to stakeholders
- Collaborate across multiple teams to support data-driven decision-making and analytics initiatives
- Support knowledge sharing by explaining complex data concepts to junior team members

Requirements

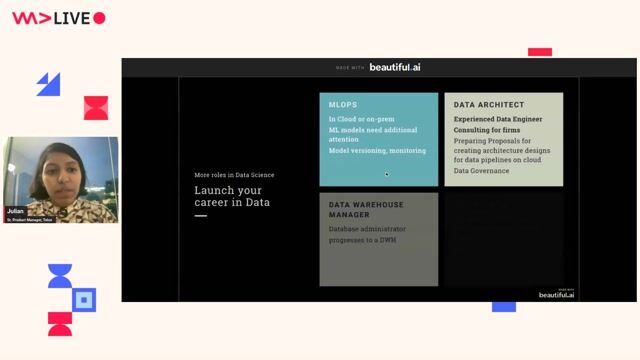

The ideal candidate has strong programming skills, experience working across distributed data systems, and the ability to translate complex technical data problems into reusable, production-ready solutions for stakeholders.

- Strong experience in data engineering and data pipeline development, including ETL/ELT design and implementation
- Proficiency in Python programming for data processing and automation
- Strong experience with SQL and relational database systems
- Experience working in Linux environments with advanced Bash scripting
- Experience building and managing data pipelines using Apache NiFi or similar tools
- Experience processing both structured and unstructured data sources
- Experience working with Elasticsearch and Kibana
- Experience using Git-based version control systems
- Experience using Jupyter Notebooks for analysis and prototyping
- Experience delivering technical results through documentation and stakeholder briefings
- Strong communication skills and experience working with multiple stakeholders
- Experience creating reusable, tested, and maintainable data solutions
- Academic or professional background in math, statistics, physics, computer science, data science, or related fields

- Experience with cloud platforms such as AWS and cloud-based data architectures
- Experience with big data processing frameworks such as Apache Spark or Trino
- Experience applying machine learning algorithms and NLP techniques
- Experience with containerization technologies such as Docker or Kubernetes
- Experience with data visualization tools such as Tableau, Kibana, or Apache Superset
- Experience working with or designing machine learning workflows and models
- Experience creating training materials or technical curriculum in data or scientific domains
- Familiarity with data science MLOps or production ML workflows

Benefits & conditions

What we offer:

- Flexible time off
- Full medical coverage
- 401(k) with company match
- Referral bonuses
- Performance bonuses
- Life insurance and disability coverage
- Tuition and training reimbursement