Data Engineer

Job description

As Data Engineer, AI, you will play a key role in building and advancing LVT's data platform - contributing across the full data engineering lifecycle, from ingestion and transformation to semantic modeling and delivery - while also helping build the data infrastructure that powers LVT's AI initiatives, including RAG pipelines and Snowflake Cortex models. This is a core engineering role with an AI edge: you'll keep the data platform running with precision and reliability, and you'll be the person who makes sure our AI systems have the clean, well-structured data they need to perform.

LVT is a flexible-first company. This role can be performed remotely, with a preference for candidates who can work from our American Fork, UT office.

- Design, build, and maintain scalable ELT pipelines that move data reliably from source systems into a clean, well-governed data platform.

- Develop and maintain semantic models that expose consistent, trusted business definitions across reporting and analytics surfaces.

- Own data quality - implement validation, monitoring, and alerting frameworks that catch problems before they reach stakeholders.

- Optimize Snowflake performance across ingestion, transformation, and storage, including dynamic tables, clustering, and query tuning.

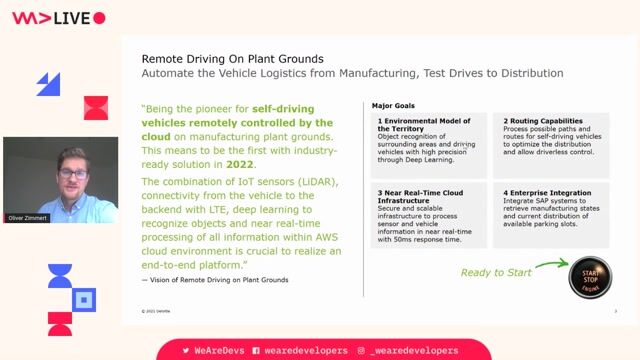

- Architect and build the data infrastructure required for RAG applications, including vector storage, chunking strategies, and retrieval pipelines.

- Collaborate with AI/ML engineers to support Snowflake Cortex model development with well-structured, context-rich training and inference data.

- Partner with analytics engineers, data scientists, and business stakeholders to translate requirements into durable data solutions.

- Document data architecture, pipeline design, and modeling decisions to support team knowledge and long-term maintainability.

Requirements

- Data Engineering Foundation: 5+ years building and maintaining production-grade pipelines using SQL, Python, and modern ELT tools such as dbt, Fivetran, or Airflow.

- Snowflake Proficiency: Deep hands-on experience with Snowflake, including performance optimization, dynamic tables, and familiarity with Cortex AI capabilities.

- Semantic Modeling Experience: Ability to design and maintain semantic or metrics layers that enforce consistent business logic across the organization.

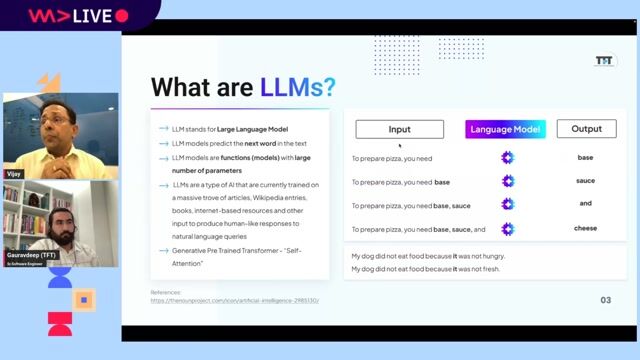

- AI Infrastructure Awareness: Working knowledge of RAG architectures and the data patterns - chunking, embedding, retrieval - that support LLM-driven applications.

- Data Quality Ownership: Strong instinct for reliability - you instrument pipelines with observability and validation from the start.

- End-to-End Mindset: Comfortable owning work from scoping through production - you define the problem, build the solution, and stand behind the outcome.

- Collaborative Communicator: Able to work fluidly with engineers, analysts, and non-technical stakeholders, translating ambiguity into clear data solutions.

Benefits & conditions

We believe you do your best work when your whole life is supported. We invest in our crew's health, families, and financial futures with a benefits package designed to support you inside and outside the office.