GenAI Data Engineer

Stott and May

Charing Cross, United Kingdom

5 days ago

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Job location

Charing Cross, United Kingdom

Tech stack

Artificial Intelligence

Amazon Web Services (AWS)

Information Engineering

ETL

Data Warehousing

Distributed Computing Environment

Distributed Systems

Amazon DynamoDB

Python

Performance Tuning

SQL Databases

Unstructured Data

Parquet

Data Processing

Large Language Models

Snowflake

Software Troubleshooting

Generative AI

Indexer

Data Lake

PySpark

Data Management

Machine Learning Operations

Data Pipelines

Redshift

Job description

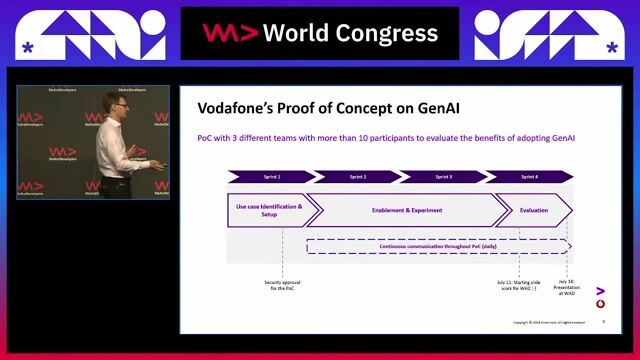

We are seeking a highly skilled GenAI Data Engineer to join a forward-thinking team delivering advanced data and AI solutions. This role will focus on designing scalable data platforms and integrating Generative AI capabilities into enterprise systems.

Key Responsibilities

- Design, build and maintain scalable data pipelines using PySpark, Python and distributed computing frameworks

- Architect and optimise AWS-based data and AI infrastructure for secure, high-performance data processing

- Develop, fine-tune, benchmark and evaluate GenAI models and LLMs, including custom training and inference optimisation

- Implement and maintain Retrieval-Augmented Generation (RAG) pipelines, vector databases and document processing workflows

- Build reusable frameworks for prompt management, evaluation and GenAI operations

- Collaborate with cross-functional teams to integrate GenAI solutions into production environments

- Ensure data quality, governance and operational reliability across systems

Requirements

- Strong experience with PySpark and large-scale distributed data processing (ETL/ELT pipelines)

- Advanced SQL expertise, including schema design (star/snowflake), indexing and optimisation techniques

- Proven experience implementing change data capture (CDC) and slowly changing dimensions (SCD Type 1/2/3) in data warehousing environments

- Advanced Python skills for data engineering, automation and AI/ML integration

- Hands-on experience with AWS services (e.g. S3, Glue, Lambda, EMR, Bedrock or custom model hosting)

- Practical experience developing and evaluating GenAI models and LLMs

- Strong understanding of RAG architectures, embeddings and vector databases

- Experience handling structured and unstructured data (e.g. text, documents, logs, images)

- Knowledge of scalable storage solutions such as Delta Lake, Parquet, Redshift and DynamoDB

- Experience optimising AI models (e.g. quantisation, distillation, inference tuning)

- Strong troubleshooting and performance optimisation skills across distributed systems

Desirable Skills

- Additional experience across PySpark, Python, SQL, AWS and GenAI technologies

Personal Attributes

- Strong analytical and problem-solving abilities

- Effective communication skills and ability to work collaboratively

- Experience working in agile, cross-functional environments