Principal High-Performance LLM Training Engineer

Job description

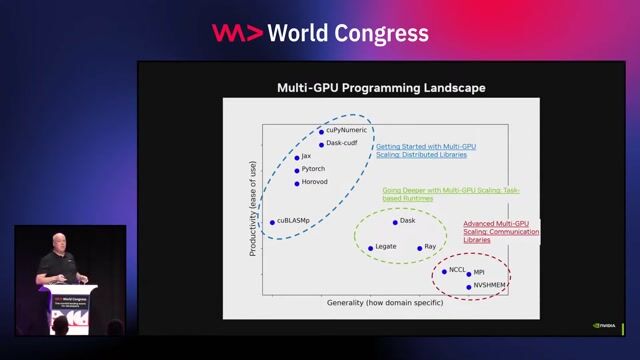

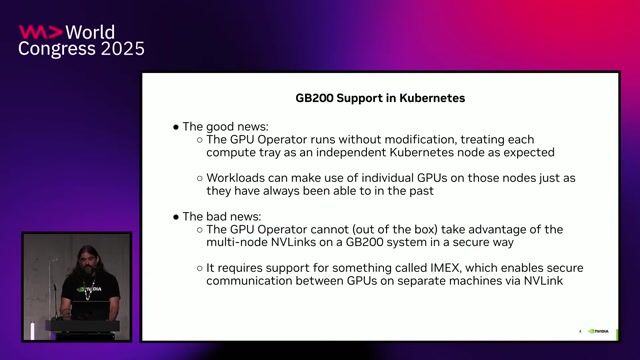

NVIDIA is seeking a Principal Engineer to drive the performance of large-scale AI training and post-training workloads across NVIDIA's full hardware and software stack. This role sits at the intersection of distributed training, GPU architecture, systems software, deep learning frameworks, and performance engineering. You will analyze and optimize frontier-scale LLM workloads running on thousands of GPUs, drive improvements across frameworks such as PyTorch, JAX, NeMo, and NeMo RL, and use insights from real workloads to help shape future NVIDIA GPU, system, and software roadmaps.

We are looking for a deeply technical leader who can operate across abstraction layers: from application-level training behavior to framework/runtime internals, CUDA libraries, communication collectives, memory systems, networking, and GPU architecture. At this level, success means both improving performance directly and setting technical direction, raising the bar for the organization, and influencing multi-functional decisions across NVIDIA.

What you will be doing:

- Lead end-to-end performance analysis and optimization of innovative LLM pre-training and post-training workloads on the latest NVIDIA hardware and software platforms.

- Drive workloads closer to speed-of-light performance by identifying and removing bottlenecks across compute, memory, communication, scheduling, parallelism strategy, kernel efficiency, framework overhead, and system-level scaling.

- Develop production-quality software, tools, models, benchmarks, and analysis infrastructure that improve training performance, efficiency, and developer velocity across NVIDIA's AI software stack.

- Build and refine performance models, workload characterizations, and simulation methodologies to guide future GPU, networking, system, and software architecture decisions.

- Serve as a technical authority for AI training performance, partnering closely with teams across GPU architecture, systems, CUDA libraries, compilers, networking, frameworks, product management, and applied AI.

- Translate workload insights into concrete hardware and software recommendations, and advocate for changes that improve performance and efficiency across the AI ecosystem.

- Mentor and provide technical leadership to engineers across the organization, helping establish best practices for large-scale AI performance analysis and optimization.

Requirements

- An MS or PhD (or equivalent experience) in Computer Science, Electrical Engineering, Computer Engineering, or a related field, with 12+ years of relevant work or research experience.

- Demonstrated principal-level technical impact in one or more of the following areas: large-scale AI training systems, GPU performance optimization, distributed systems, high-performance computing, ML frameworks, compilers/runtimes, or hardware/software co-design.

- Deep hands-on experience analyzing and optimizing performance of large-scale deep learning workloads, especially transformer-based models, LLM pre-training, reinforcement learning, fine-tuning, or other post-training workloads.

- Strong understanding of GPU and AI accelerator architecture from individual accelerators to datacenter-scale systems.

- Experience with distributed training techniques such as data parallelism, tensor parallelism, pipeline parallelism, expert parallelism, sequence parallelism, activation checkpointing, mixed precision training, and communication/computation overlap.

- A strong track record of using profiling, tracing, benchmarking, and performance modeling tools to diagnose complex bottlenecks and drive measurable improvements.

- Excellent communication and technical leadership skills, with the ability to influence architecture and software decisions across multiple teams without relying on direct authority.

Benefits & conditions

Your base salary will be determined based on your location, experience, and the pay of employees in similar positions. The base salary range is 272,000 USD - 431,250 USD.