Junior Data Engineer

Job description

Do you want to help shape and accelerate the future of our clients? Join the Platform Development & Integration team within Deloitte Engineering. You will work on a wide range of projects to turn data into actionable insights, modernize data and cloud infrastructures, and help organizations become data-driven. With your commitment and eagerness to learn, you will help ensure projects are delivered on time and to a high standard.

How do you do this?

You will be part of the Deloitte Engineering team and work on multidisciplinary projects for a range of clients. Your responsibilities will include:

- Analyzing client needs and helping to translate them into technical solutions using modern data engineering technologies such as Apache Spark, PySpark, Spark SQL, Delta Lake, and Hudi;

- Designing, developing, and maintaining scalable data-driven solutions;

- Building robust ETL and ELT data pipelines on public cloud and data platforms (AWS, Azure, GCP, Databricks, Snowflake, etc.);

- Collaborating with senior engineers to design and improve data pipelines, architectures, and modeling for our clients;

- Participating in team activities and training sessions to build your skills and support the development of our data engineering capability.

Requirements

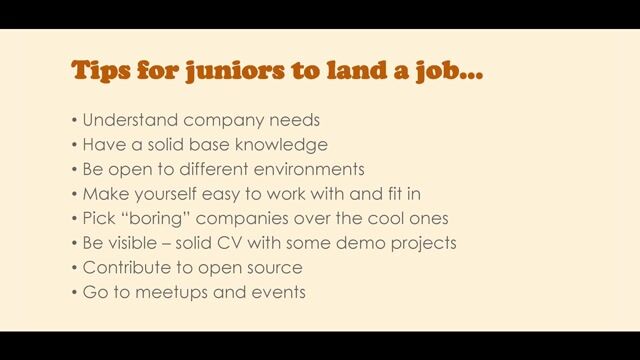

- A technical Master's degree in a related field (Data Science, Data Engineering, Artificial Intelligence, Computer Science, Software Engineering, Statistics), or a Bachelor's degree with at least two years' work experience;

- Basic knowledge of, or experience with, designing and implementing data pipelines using modern data engineering technologies and frameworks such as Apache Spark, Apache Airflow, Iceberg, Delta Lake, and Hudi;

- Knowledge of at least one programming language such as Python or SQL; experience with PySpark is an advantage;

- Familiarity with public cloud platforms such as AWS, Azure, and Google Cloud Platform (GCP);

- Strong analytical and problem-solving skills;

- An excellent command of English and Dutch, both written and spoken.

Benefits & conditions

- in addition to a competitive salary, a share in our profits

- an overtime arrangement that allows you to receive compensation for overtime

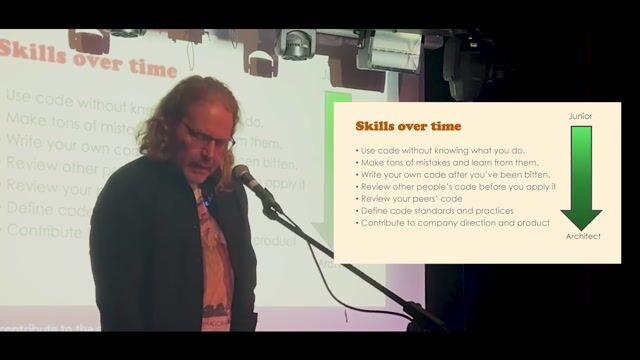

- great growth opportunities, depending on your ambitions and performance

- a development program that helps you keep growing

- flexible working hours and the opportunity to work from home

- 26 days of paid holiday annually, and the opportunity to purchase 15 additional holiday days annually

- a 32- or 40-hour working week

- the opportunity to take a month of unpaid leave once annually

- the possibility to go on sabbatical for at least 2 months

- a good mobility scheme: a choice between a company car with a fuel pass for Europe, the Mobility+ option, a gross cash option with which you arrange all your own transport, or an annual public transport subscription

- an iPhone, which is also for personal use

- a laptop with a 4G connection

- a good pension scheme

- an opportunity to take part in our collective health insurance scheme

- an opportunity to benefit from tax-efficient facilities, such as company fitness and a bicycle scheme

What impact will you make?