LIA - Laboratoire d'Informatique d'Avignon

Role details

Job location

Tech stack

Job description

We have recently initiated the development of a framework that integrates Zero Trust Architecture (ZTA) principles into 6G networks. Continuous re-authentication, access control, and monitoring can introduce substantial computational and latency overhead, potentially conflicting with the ultra-low latency requirements of 6G. We therefore proposed a Dirichlet-based Zero Trust Model (D-ZTAM) for selective authentication. This model evaluates asset trustworthiness based on behavioral evidence, assesses contextual risk, and dynamically determines the ZTA authentication decision: access authorization, access denial, or a re-authentication trigger. This study highlights that the effective adoption of zero trust principles in 6G will require adaptive mechanisms capable of continuously monitoring the behavior and context of network elements. Accordingly, this PhD thesis will investigate these challenges throughout its three-year duration.

Implementation: Design of Secure and Reliable Technologies:

- Methods for Ensuring AI Model Integrity and Verifiability in 6G (Blockchain for AI Security): Blockchain technology offers strong potential for enhancing AI security through data verifiability, traceability, and integrity. It can be leveraged to ensure that AI models deployed in 6G systems remain transparent, auditable, and resistant to tampering.

-

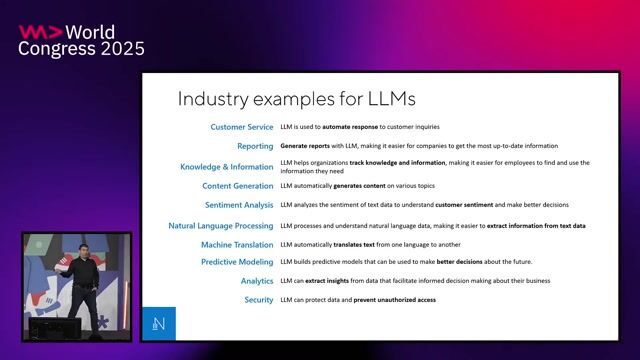

LLM-Based Agents for AI Verification and Trust Management in 6G Large Language Models will be integrated as intelligent verification agents to enhance the integrity, transparency, and trustworthiness of AI-driven 6G systems. These LLM-based agents will operate as supervisory components, monitoring the behavior of AI models deployed across distributed edge environments. Specifically, LLMs will be used to analyze training logs, federated learning updates, execution traces, and contextual metadata in order to detect anomalous behaviors, identify potential model poisoning or tampering attempts, and assess deviations from expected operational patterns. By correlating multi-source evidence across time and network layers, LLM-based agents can support semantic-level anomaly detection that complements statistical and cryptographic verification mechanisms. Finally, LLM-based agents will support trust-aware decision-making by contributing to dynamic trust scoring mechanisms within Zero Trust Architecture frameworks, enabling adaptive security enforcement while respecting 6G latency constraints., I. Objective 1: Integrity and Verifiability of AI Models in 6G The first objective is to ensure the integrity and verifiability of AI models deployed in 6G environments. This objective focuses on addressing two major challenges: data poisoning and AI model ownership and provenance. Challenge 1: Data Poisoning Since AI systems in 6G will rely on federated learning, crowdsourced sensing, digital twin feedback, and data contributions from user devices, adversaries may attempt to inject malicious or falsified data. It is therefore essential to investigate mechanisms for detecting poisoned data, identifying its origin, and establishing trust in distributed user contributions. Challenge 2: Model Ownership and Provenance In 6G, AI models may be developed and deployed by multiple stakeholders, including network operators, municipalities, organizations, or individual entities. 
Consequently, it becomes crucial to monitor the AI supply chain in order to determine model provenance, assign responsibility, and ensure accountability throughout the model lifecycle. II. Objective 2: LLM-Based Agents for AI Model Verification The second objective is to explore the use of Large Language Model (LLM)-based agents for verifying AI models in 6G environments. AI algorithms will be executed on highly distributed edge devices operating under heterogeneous conditions, resulting in diverse execution behaviors and contextual dependencies. Currently, the role and internal operation of AI models in 6G networks are not fully transparent, despite their involvement in critical functions such as RAN control, network slicing, mobility prediction, and dynamic configuration of reconfigurable intelligent surfaces (RIS). In this context, well-tuned LLM-based agents can assist in inspecting training logs, analyzing model update patterns, detecting abnormal or malicious weight updates, and flagging anomalies during federated learning rounds.
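For candidates unfamiliar with the problem, the idea of flagging abnormal weight updates in a federated learning round can be illustrated with a very simple statistical baseline. The sketch below is not the project's method and does not involve an LLM; it only shows the kind of signal (here, a robust outlier score on update norms) that a verification agent might be given as evidence. The function names, the client identifiers, and the threshold of 3.5 are all illustrative assumptions.

```python
import math
import statistics


def l2_norm(update):
    """Euclidean norm of a flat list of weight deltas."""
    return math.sqrt(sum(w * w for w in update))


def flag_anomalous_updates(updates, threshold=3.5):
    """Return the client ids whose update norm is a robust outlier.

    `updates` maps a (hypothetical) client id to a flat list of weight
    deltas for one round. Outliers are scored with the modified z-score,
    which uses the median and the median absolute deviation (MAD) so that
    a single large update cannot distort the reference statistics.
    """
    norms = {cid: l2_norm(u) for cid, u in updates.items()}
    median = statistics.median(norms.values())
    mad = statistics.median(abs(n - median) for n in norms.values())
    if mad == 0:
        # No spread to scale against; this simple baseline abstains.
        return []
    return [
        cid
        for cid, n in norms.items()
        if 0.6745 * abs(n - median) / mad > threshold
    ]


# Toy round: four similar clients and one with a much larger update.
round_updates = {
    "a": [0.10, 0.20],
    "b": [0.11, 0.19],
    "c": [0.09, 0.21],
    "d": [0.10, 0.18],
    "e": [5.00, -4.00],
}
flagged = flag_anomalous_updates(round_updates)
```

A norm-based check like this only catches crude attacks; the thesis topic concerns combining such statistical signals with cryptographic verification and semantic-level analysis of logs and context.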

Requirements

We are looking for highly motivated candidates who are ready to commit to high-quality research. Candidates must hold a master's degree or equivalent in computer science or applied mathematics. Candidates should have a solid theoretical background in AI, machine learning algorithms, LLMs, networking / edge computing, and optionally blockchain concepts. Strong coding skills in the following: Python, TensorFlow / PyTorch.