AWS Snowflake Data Engineer - Up To €65,000

Job description

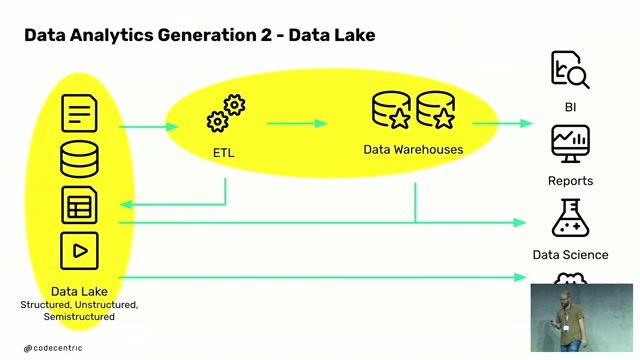

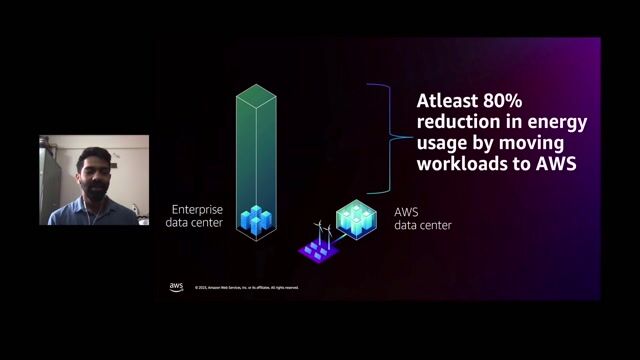

Our client is hiring a Data Engineer to join their growing global team as they build out their new technology hub in Madrid. In this role, you will design, build, and maintain scalable systems and infrastructure to process and analyse complex, high-volume datasets. Working closely with data scientists, analysts, product managers, and other stakeholders, you will translate business needs into robust technical solutions that power innovative data products and services across the business and for its customers.

Key Responsibilities

- Design, build, and maintain performant batch and streaming data pipelines to support both real-time market data and large-scale batch processing (Apache Airflow, Apache Kafka, Apache Flink).
- Develop scalable data warehousing solutions using Snowflake and other modern platforms.
- Architect and manage cloud-based infrastructure (AWS or GCP) to ensure resilience, scalability, and efficiency.
- Enhance and optimise CI/CD pipelines (Jenkins, GitLab) to streamline development, testing, and deployment.
- Monitor and maintain the health and performance of data applications, pipelines, and databases using observability tools (Prometheus, Grafana, CloudWatch).
- Partner with stakeholders across functions to deliver reliable data services and enable analytical product development.
- Contribute actively to agile ceremonies (stand-ups, sprint planning, retrospectives).

Requirements

Experience & Competencies

Essential:

- Experience in Data Engineering.
- Hands-on experience with AWS, Snowflake, Kubernetes, and Airflow.
- Experience building and scaling API-driven data platforms (FastAPI).
- Expertise in Python (with frameworks such as Pandas, Dask, PySpark) and strong SQL skills.
- Strong understanding of ETL processes and event streaming (Kafka, Flink, etc.).
- Knowledge of monitoring and alerting systems (Prometheus, Grafana, CloudWatch).
- Comfortable with Linux and command-line operations.

Desired:

- Exposure to financial markets data or capital markets environments.