Lead Performance Tester (SDET)

Vonage

Charing Cross, United Kingdom

2 days ago

Role details

Contract type

Permanent contract

Employment type

Full-time (> 32 hours)

Working hours

Regular working hours

Languages

English

Experience level

Senior

Job location

Charing Cross, United Kingdom

Tech stack

Java

API

Agile Methodologies

Amazon Web Services (AWS)

Application Performance Management

Cloud Computing

Code Review

Databases

Continuous Integration

Distributed Systems

Fault Tolerance

GitHub

Monitoring of Systems

JMeter

Python

Load Testing

Log Analysis

Message Broker

MySQL

Performance Tuning

RabbitMQ

Reliability Engineering

Cloud Services

Software Requirements Analysis

Systems Architecture

Test Data

TypeScript

WebRTC

Scripting (Bash/Python/Go/Ruby)

Performance Testing

Grafana

Concurrency

Gatling

Backend

Event Driven Architecture

Integration Tests

Low Latency

Kafka

CloudWatch

API Gateway

REST

SDET

Jenkins

Microservices

Job description

- Define and lead the performance engineering strategy across high-availability, cloud-native telecom platforms.

- Lead, train, and coordinate a distributed guild of SDETs across multiple feature teams, guiding them to build, maintain, and execute performance scripts (k6) within their fractional sprint capacity.

- Design, develop, and evolve scalable backend performance frameworks using k6, Python, Java, or TypeScript, focusing entirely on APIs, databases, and infrastructure.

- Implement and manage production traffic replay strategies (e.g., GoReplay) and complex multi-tenant test data generation to accurately recreate realistic production contention and load profiles.

- Own and drive performance, load, stress, endurance, scalability, and resilience testing, including chaos engineering practices (e.g., using AWS Fault Injection Simulator).

- Validate real-time communication systems, event-driven architectures (Kafka, RabbitMQ), and high-throughput distributed systems under production-like conditions.

- Integrate performance testing into CI/CD pipelines, enabling continuous performance validation, shift-left practices, and automated PR blocking for latency degradation.

- Leverage observability tooling (Grafana, CloudWatch, Coralogix, VictoriaMetrics, PMM) to monitor, analyse, and troubleshoot system performance.

- Establish performance benchmarks, SLAs, SLOs, and error budgets aligned with business and system requirements.

- Collaborate with architecture and data teams to identify bottlenecks, validate database architectures (e.g., MySQL, Tungsten), and present evidence-based investment proposals.

- Analyse and optimise performance across both legacy monolith systems (requiring integrated load testing) and modern microservices architectures.

Requirements

- Proven experience mentoring and coordinating testing efforts across large, distributed engineering teams (e.g., conducting code reviews, guiding SDETs through framework adoption, and managing cross-team test schedules).

- Strong hands-on experience with AWS cloud services (EC2, ECS, RDS, API Gateway) with a focus on scalability and performance optimisation.

- Proven expertise in performance testing tools (specifically k6, along with JMeter or Gatling), including test design, execution, and analysis at scale.

- Hands-on experience with production traffic shadowing or replay tools (such as GoReplay).

- Deep experience testing distributed systems, message brokers (Kafka, RabbitMQ), microservices architectures, and high-volume, low-latency APIs.

- Solid backend programming and scripting skills in Python, Java, or TypeScript to build custom performance frameworks and tooling.

- Strong understanding of system design, concurrency, throughput, latency, and fault tolerance principles.

- Experience implementing performance testing within CI/CD pipelines (Jenkins, GitHub Actions) and enabling continuous performance feedback loops.

- Hands-on experience with observability and monitoring tools (Grafana, Coralogix, PMM) for performance analysis and diagnostics.

- Experience working within Agile environments and translating performance insights into actionable engineering outcomes.

Required

- Demonstrable hands-on experience in backend performance engineering and automation using Python, Java, or TypeScript.

- Proven track record of performance, scalability, and resilience testing in AWS-based cloud-native systems.

- Strong experience designing large-scale performance test strategies and leading QA/SDET teams to execute them.

- Deep understanding of API performance testing, integration testing, and system-level non-functional validation.

- Solid grasp of system architecture, distributed systems behaviour, and non-functional testing methodologies.

- Experience with observability, monitoring, and log analysis tools.

- Familiarity with RESTful APIs and event-driven systems.

Application Requirements

- Updated CV clearly demonstrating performance engineering leadership, cross-team coordination, AWS expertise, and backend automation frameworks.

- Summary of performance testing frameworks, tools (e.g., k6, GoReplay), and CI/CD methodologies implemented.

- Detailed examples of performance testing engagements (scale, environments, bottlenecks identified, business impact, improvements delivered).

- Evidence of driving performance strategy, mentoring QA/SDET teams, and influencing architecture.

About the company

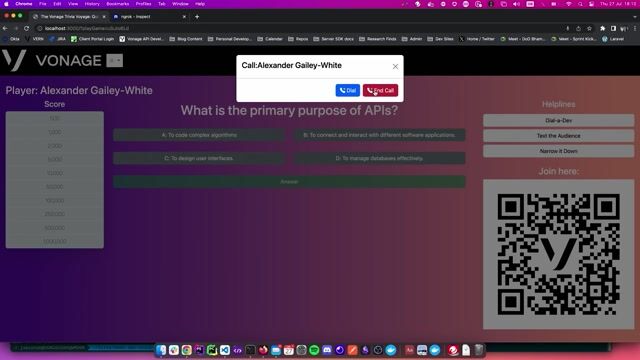

Join Vonage and help us innovate cloud communications for businesses worldwide! Vonage is a global cloud communications leader, and your talent will help brands such as Airbnb, Viber, WhatsApp, and Snapchat accelerate their digital transformation through our fully programmable unified communications, contact center solutions, and communications APIs. Ready to innovate? Then join us today.