Data Engineer (Spark/Kubernetes) (Financial Services)

Job description

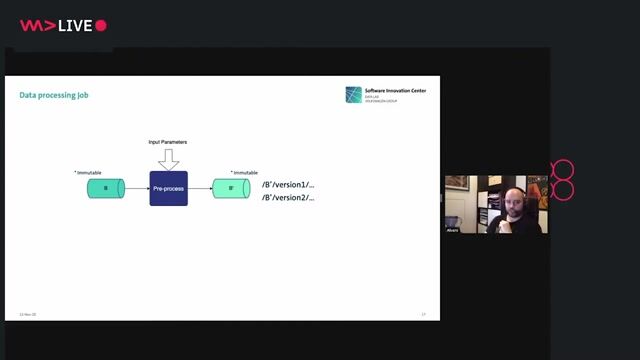

We are seeking a Data Engineer to support the replacement of a legacy ETL tool with a modern Apache Spark-based data platform. This is a hands-on engineering role focused on building and supporting Spark jobs, with an emphasis on performance, reliability, and scalability. The role sits within a small Agile delivery team of four engineers (two onshore and two in Shenzhen), working closely with a Senior Data Engineer. You will be responsible for development work, sprint delivery, demos, documentation, and stakeholder engagement. This position suits a mid-level engineer with strong Spark development experience, and it centres on development rather than design, infrastructure, or management responsibilities.

Requirements

- Strong hands-on experience with Apache Spark: writing and tuning Spark jobs, with PySpark development experience.

- Strong experience working with containerised environments using Kubernetes.

- Experience programming in Python or Scala

- Exposure to Big Data technologies and distributed data processing

- Some working knowledge of Java, or a Java Spring Boot development background

- Experience with an Ops way of working, not development alone: you know how to deploy solutions.

- Experience with OpenShift would be highly desirable!

- Experience working in an Agile way (Scrum, sprints, demos)

- Financial services or professional services experience required.