AWS / ETL Technical Lead

Apetan Consulting

Role details

- Contract type: Permanent contract

- Employment type: Full-time (> 32 hours)

- Working hours: Regular working hours

- Languages: English

- Experience level: Senior

- Job location: Remote

Tech stack

Java

Airflow

Amazon Web Services (AWS)

Big Data

Data Architecture

Information Engineering

Data Governance

Data Integration

ETL

Data Mining

Data Warehousing

DevOps

Hadoop

Python

Scrum

SQL Databases

Workflow Management Systems

Data Logging

Spark

Git

Data Analytics

Kafka

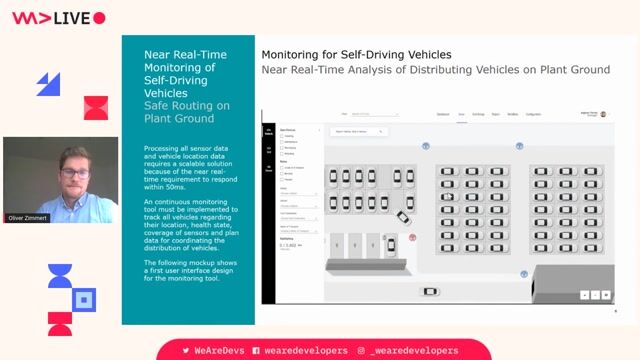

Video Streaming

Software Version Control

Data Pipelines

Serverless Computing

Programming Languages

Job description

We are seeking an experienced AWS / ETL Technical Lead to design and build scalable data integration and processing solutions in the cloud and to lead their delivery. You will drive the data architecture, oversee ETL development, and guide teams in delivering reliable, high-performance data pipelines.

- Lead the design and development of ETL pipelines and data workflows on AWS

- Architect scalable data solutions using cloud-native services

- Oversee data extraction, transformation, and loading processes from multiple sources

- Ensure data quality, integrity, and governance standards are maintained

- Collaborate with data engineers, analysts, and business stakeholders

- Optimize data pipelines for performance, scalability, and cost efficiency

- Implement monitoring, logging, and error-handling mechanisms

- Guide and mentor team members on best practices and technologies

- Participate in sprint planning, estimations, and technical decision-making

Requirements

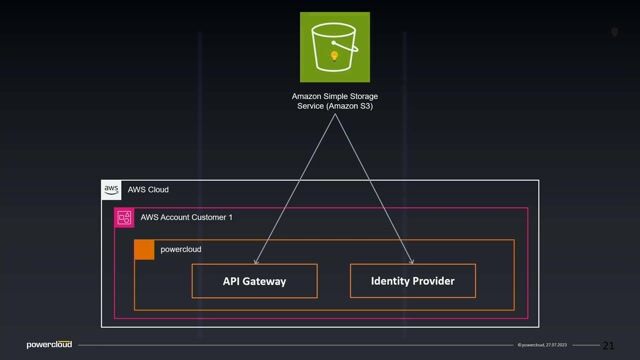

- Strong experience with AWS services such as Glue, S3, Lambda, Redshift, and EMR

- Hands-on experience in ETL development and data pipeline design

- Proficiency in SQL and at least one programming language (Python, Scala, or Java)

- Experience with data warehousing concepts and large-scale data processing

- Familiarity with orchestration tools (Airflow, Step Functions, etc.)

- Strong understanding of data modeling and data architecture

- Experience with version control systems (Git)

- Experience with streaming technologies (Kafka, Kinesis)

- Knowledge of big data frameworks (Spark, Hadoop)

- Familiarity with DevOps practices and CI/CD pipelines

- Experience with data governance and security standards

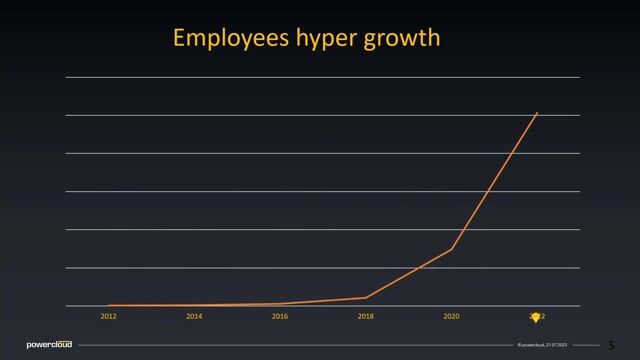

- AWS certifications (e.g., Solutions Architect, Data Analytics Specialty)

- 8-12+ years of overall IT experience

- 4-6+ years of experience in AWS and ETL/data engineering roles

- Prior experience in a technical lead or architect role preferred