ETL/ELT - Data Engineer

IDT

29 days ago

Role details

Contract type: Permanent contract

Employment type: Full-time (> 32 hours)

Working hours: Regular working hours

Languages: English

Experience level: Senior

Job location: Remote

Tech stack

Microsoft Windows

API

Agile Methodologies

Amazon Web Services (AWS)

Data analysis

Batch Processing

Cloud Computing

Computer Programming

Databases

Information Engineering

Data Integration

Data Integrity

ETL

Data Virtualization

Data Visualization

Data Warehousing

Relational Databases

JSON

Job Scheduling

Python

MySQL

Online Transaction Processing

Open Source Technology

Oracle Applications

Pentaho Data Integration

Performance Tuning

Software Engineering

PL/SQL

SQL Databases

Systems Integration

Data Processing

Azure

Snowflake

Ab Initio

Real Time Data

Functional Programming

Looker Analytics

Software Version Control

Data Pipelines

Redshift

Job description

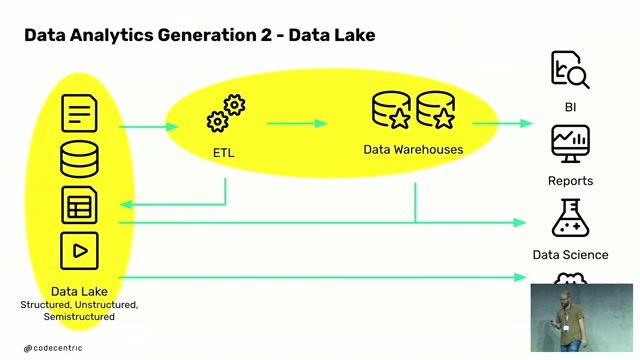

- Design, implement, and validate ETL/ELT data pipelines for batch processing, streaming integrations, and data warehousing, while maintaining comprehensive documentation and testing to ensure reliability and accuracy.

- Maintain end-to-end Snowflake data warehouse deployments and develop Denodo data virtualization solutions.

- Recommend process improvements to increase efficiency and reliability in ETL/ELT development.

- Stay current on emerging data technologies and support pilot projects, ensuring the platform scales seamlessly with growing data volumes.

- Architect, implement and maintain scalable data pipelines that ingest, transform, and deliver data into real-time data warehouse platforms, ensuring data integrity and pipeline reliability.

- Partner with data stakeholders to gather requirements for language-model initiatives and translate them into scalable solutions.

- Create and maintain comprehensive documentation for all data processes, workflows and model deployment routines.

- Stay informed about emerging data engineering methodologies and open-source technologies, and be willing to learn them.

Requirements

- 5+ years of experience in ETL/ELT design and development, integrating data from heterogeneous OLTP systems and APIs, and building scalable data warehouse solutions to support business intelligence and analytics.

- Excellent English communication skills.

- Effective oral and written communication skills with the BI team and the user community.

- Demonstrated experience using Python for data engineering tasks, including transformation, advanced data manipulation, and large-scale data processing.

- Ability to design and implement event-driven pipelines that use messaging and streaming events to trigger ETL workflows and enable scalable, decoupled data architectures.

- Experience in data analysis and root cause analysis, with proven problem-solving and analytical-thinking skills.

- Experience designing complex data pipelines that extract data from RDBMS, JSON, API, and flat-file sources.

- Demonstrated expertise in SQL and PL/SQL programming, advanced knowledge of business intelligence and data warehouse methodologies, and hands-on experience with one or more relational database systems and cloud-based database services such as Oracle, MySQL, Amazon RDS, Snowflake, or Amazon Redshift.

- Proven ability to analyze and optimize poorly performing queries and ETL/ELT mappings, providing actionable recommendations for performance tuning.

- Understanding of software engineering principles, skill working on Unix/Linux/Windows operating systems, and experience with Agile methodologies.

- Proficiency in version control systems, with experience in managing code repositories, branching, merging, and collaborating within a distributed development environment.

- Interest in business operations and a comprehensive understanding of how robust BI systems drive corporate profitability by enabling data-driven decision-making and strategic insights.

- Experience developing ETL/ELT processes within Snowflake and implementing complex data transformations using its built-in functions and SQL capabilities.

- Experience using Pentaho Data Integration (Kettle) or Ab Initio ETL tools to design, develop, and optimize data integration workflows.

- Experience designing and implementing cloud-based ETL solutions using Azure Data Factory, dbt, AWS Glue, AWS Lambda, and open-source tools.

- Experience with reporting/visualization tools (e.g., Looker) and job scheduling software.

- Industry experience in telecom, e-commerce, or international mobile top-up.

- Preferred certifications: AWS Solutions Architect, AWS Cloud Data Engineer, Snowflake SnowPro Core.

Please attach your CV in English. The interview process will be conducted in English. We are only accepting applicants from LATAM.

About the company

IDT (www.idt.net) is an American telecommunications company founded in 1990 and headquartered in New Jersey. Today it is an industry leader in prepaid communication and payment services and one of the world's largest international voice carriers. We are listed on the NYSE, employ over 1,300 people across 20+ countries, and have revenues in excess of $1.5 billion.