Data Engineer (Snowflake/DBT)

Job description

You'll work with the team to help deliver data sources and data capabilities (that is: tools, data sources and data products that enable business teams to find and get the best from our data) across a wide range of technologies and stakeholders.

It's not purely a technical role, but a hybrid one: you'll be expected to work with business subject matter experts to understand requirements, help gather and collate information, and formulate the approach along with the team. You may not have worked with every technology in our stack, which will be an opportunity for you to learn something new and ground-breaking. You'll be responsible for contributing to the analysis, implementation and testing of the working data pipelines required by the Product Owner.

- Location: Madrid (Hybrid)
- Function: Big Data
- Working hours: Full-time
- Experience: 3 years
- Contract type: Permanent
- Tech stack: Snowflake, data, ETL, Python

Requirements

You'll be familiar with Agile methodologies such as Scrum or Kanban and have worked in teams that use this approach. As a team, we support each other in our personal development, knowing that everyone has their own strengths, and we work to share those across the team.

Mandatory requirements:

-

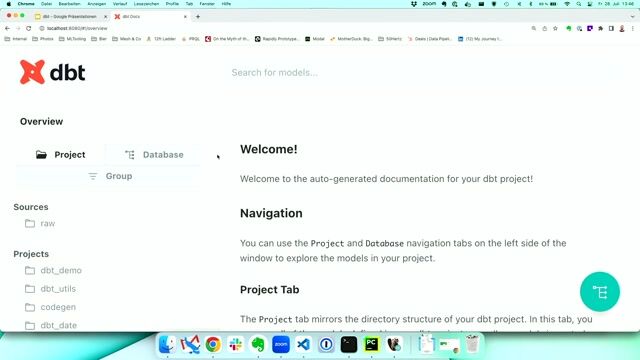

Experience with Snowflake and DBT

-

Experience in Python, specifically within Data Engineering, in a commercial setting

-

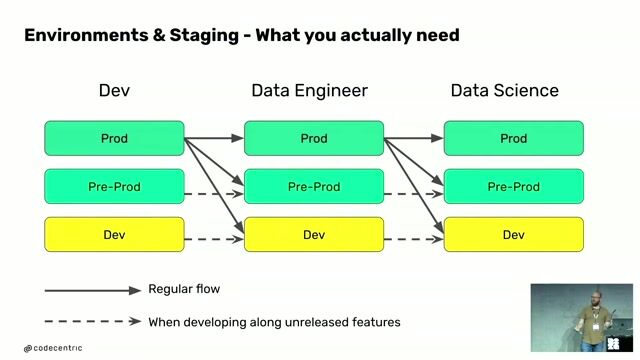

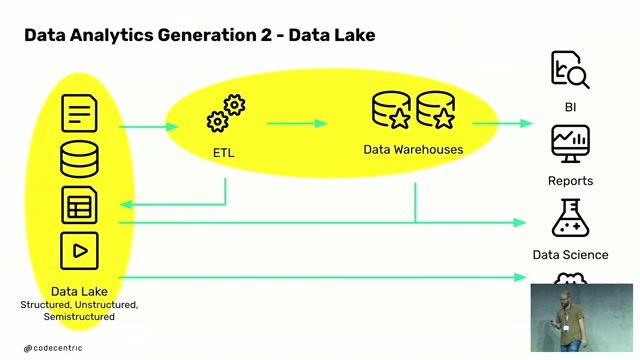

Excellent understanding of ETL/ELT patterns, idempotency and other data engineering best practices.

-

Experience with data modelling (3rd normal form, star schemas, wide/tall projections)

-

Excellent SQL knowledge, including an understanding of how to write optimized SQL code, good general knowledge of different SQL engines, and what considerations they bring when optimizing.

-

Practical understanding of how to profile SQL and manage performance trade-offs.

-

Knowledgeable in how to build data pipelines that robustly handle different possible modes of failure and maintain Metadata.

Desirable:

- Educated to undergraduate level or higher in a numerate or applied science subject; relevant industry experience is considered equally valuable
- Snowflake or DBT certifications
- Demonstrable experience of engineering in a cloud-based environment, especially with AWS or Azure
- Any experience of metadata-driven engineering
- Any experience of integrating data warehouses or data pipelines with data governance tools like Collibra

Benefits & conditions

- "Retribución Flexible" (flexible compensation) program: meals, kindergarten, transport, online English lessons, health care plan…
- Free access to several training platforms
- Professional stability and career plans
- UST also rewards referrals, so you can benefit when you refer professionals
- The option to choose between 12 or 14 payments per year
- Real work-life balance measures (flexibility, WFH or remote work policy, compressed hours during summer…)
- UST Club Platform discounts and gym access discounts

If you would like to know more, do not hesitate to apply and we'll get in touch to fill you in on the details. UST is waiting for you!

At UST we are committed to equal opportunities in our selection processes and do not discriminate based on race, gender, disability, age, religion, sexual orientation or nationality. We have a special commitment to Disability & Inclusion, so we are interested in hiring people with a disability certificate.